Kubernetes Cluster API

Cluster API is a Kubernetes sub-project focused on providing declarative APIs and tooling to simplify provisioning, upgrading, and operating multiple Kubernetes clusters.

Started by the Kubernetes Special Interest Group (SIG) Cluster Lifecycle, the Cluster API project uses Kubernetes-style APIs and patterns to automate cluster lifecycle management for platform operators. The supporting infrastructure, like virtual machines, networks, load balancers, and VPCs, as well as the Kubernetes cluster configuration, are all defined in the same way that application developers deploy and manage their workloads. This enables consistent and repeatable cluster deployments across a wide variety of infrastructure environments.

Getting started

Using Cluster API v1alpha3? See the legacy documentation.

Why build Cluster API?

Kubernetes is a complex system that relies on several components being configured correctly to have a working cluster. Recognizing this as a potential stumbling block for users, the community focused on simplifying the bootstrapping process. Today, over 100 Kubernetes distributions and installers have been created, each with different default configurations for clusters and supported infrastructure providers. SIG Cluster Lifecycle saw a need for a single tool to address a set of common overlapping installation concerns and started kubeadm.

Kubeadm was designed as a focused tool for bootstrapping a best-practices Kubernetes cluster. The core tenet behind the kubeadm project was to create a tool that other installers can leverage, ultimately reducing the amount of configuration that an individual installer needs to maintain. Since it began, kubeadm has become the underlying bootstrapping tool for several other applications, including Kubespray, Minikube, and kind.

However, while kubeadm and other bootstrap providers reduce installation complexity, they don’t address how to manage a cluster day-to-day or a Kubernetes environment long term. You are still faced with several questions when setting up a production environment, including:

- How can I consistently provision machines, load balancers, VPC, etc., across multiple infrastructure providers and locations?

- How can I automate cluster lifecycle management, including things like upgrades and cluster deletion?

- How can I scale these processes to manage any number of clusters?

SIG Cluster Lifecycle began the Cluster API project as a way to address these gaps by building declarative, Kubernetes-style APIs that automate cluster creation, configuration, and management. Using this model, Cluster API can also be extended to support any infrastructure provider (AWS, Azure, vSphere, etc.) or bootstrap provider (kubeadm is the default) you need. See the growing list of available providers.

Goals

- To manage the lifecycle (create, scale, upgrade, destroy) of Kubernetes-conformant clusters using a declarative API.

- To work in different environments, both on-premises and in the cloud.

- To define common operations, provide a default implementation, and provide the ability to swap out implementations for alternative ones.

- To reuse and integrate existing ecosystem components rather than duplicating their functionality (e.g. node-problem-detector, cluster autoscaler, SIG-Multi-cluster).

- To provide a transition path for Kubernetes lifecycle products to adopt Cluster API incrementally. Specifically, existing cluster lifecycle management tools should be able to adopt Cluster API in a staged manner, over the course of multiple releases, or even adopt only a subset of Cluster API.

Non-goals

- To add these APIs to Kubernetes core (kubernetes/kubernetes).

- This API should live in a namespace outside the core and follow the best practices defined by api-reviewers, but is not subject to core-api constraints.

- To manage the lifecycle of infrastructure unrelated to the running of Kubernetes-conformant clusters.

- To force all Kubernetes lifecycle products (kops, kubespray, GKE, AKS, EKS, IKS etc.) to support or use these APIs.

- To manage non-Cluster API provisioned Kubernetes-conformant clusters.

- To manage a single cluster spanning multiple infrastructure providers.

- To configure a machine at any time other than create or upgrade.

- To duplicate functionality that exists or is coming to other tooling, e.g., updating kubelet configuration (cf. dynamic kubelet configuration), or updating apiserver, controller-manager, scheduler configuration (cf. component-config effort) after the cluster is deployed.

🤗 Community, discussion, contribution, and support

Cluster API is developed in the open, and is constantly being improved by our users, contributors, and maintainers. It is because of you that we are able to automate cluster lifecycle management for the community. Join us!

If you have questions or want to get the latest project news, you can connect with us in the following ways:

- Chat with us on the Kubernetes Slack in the #cluster-api channel

- Subscribe to the SIG Cluster Lifecycle Google Group for access to documents and calendars

- Participate in the conversations on Kubernetes Discuss

- Join our Cluster API working group sessions where we share the latest project news, demos, answer questions, and triage issues

- Weekly on Wednesdays @ 10:00 PT on Zoom

- Previous meetings: [ notes | recordings ]

Pull Requests and feedback on issues are very welcome! See the issue tracker if you’re unsure where to start, especially the Good first issue and Help wanted tags, and also feel free to reach out to discuss.

See also our contributor guide and the Kubernetes community page for more details on how to get involved.

Code of conduct

Participation in the Kubernetes community is governed by the Kubernetes Code of Conduct.

Quick Start

In this tutorial we’ll cover the basics of how to use Cluster API to create one or more Kubernetes clusters.

Installation

Common Prerequisites

Install and/or configure a Kubernetes cluster

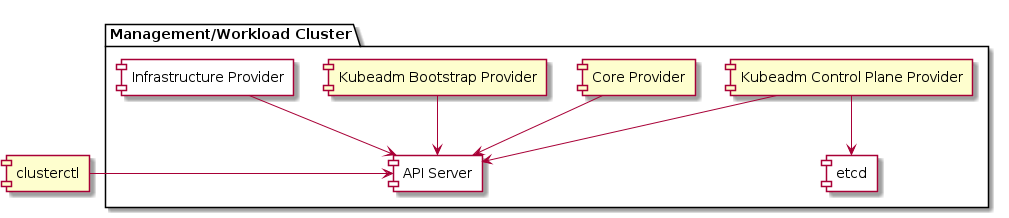

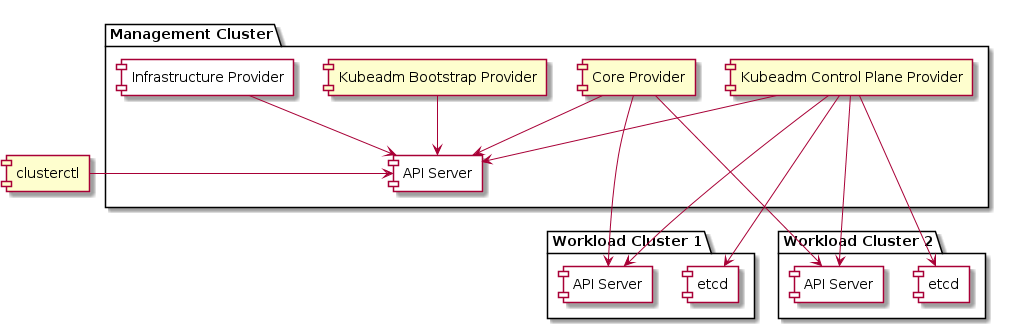

Cluster API requires an existing Kubernetes cluster accessible via kubectl. During the installation process, the Kubernetes cluster will be transformed into a management cluster by installing the Cluster API provider components, so it is recommended to keep it separate from any application workloads.

It is a common practice to create a temporary, local bootstrap cluster which is then used to provision a target management cluster on the selected infrastructure provider.

Choose one of the options below:

Existing Management Cluster

For production use cases, a “real” Kubernetes cluster should be used with appropriate backup and disaster recovery (DR) policies and procedures in place. The Kubernetes cluster must be at least v1.19.1.

export KUBECONFIG=<...>

Kind

kind can be used for creating a local Kubernetes cluster for development environments or for the creation of a temporary bootstrap cluster used to provision a target management cluster on the selected infrastructure provider.

The installation procedure depends on the version of kind; if you are planning to use the Docker infrastructure provider, please follow the additional instructions below.

Create the kind cluster:

kind create cluster

Test to ensure the local kind cluster is ready:

kubectl cluster-info

If you are planning to use the Docker provider, run the following command to create a kind config file that allows the Docker provider to access Docker on the host:

cat > kind-cluster-with-extramounts.yaml <<EOF
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
- role: control-plane
  extraMounts:
    - hostPath: /var/run/docker.sock
      containerPath: /var/run/docker.sock
EOF

Then follow the instructions for your kind version, using

kind create cluster --config kind-cluster-with-extramounts.yaml

to create the management cluster using the above file.

Install clusterctl

The clusterctl CLI tool handles the lifecycle of a Cluster API management cluster.

Install clusterctl binary with curl on Linux

Download the latest release; on Linux, type:

curl -L https://github.com/kubernetes-sigs/cluster-api/releases/download/v0.4.8/clusterctl-linux-amd64 -o clusterctl

Make the clusterctl binary executable.

chmod +x ./clusterctl

Move the binary into your PATH.

sudo mv ./clusterctl /usr/local/bin/clusterctl

Test to ensure the version you installed is up-to-date:

clusterctl version

Install clusterctl binary with curl on macOS

Download the latest release; on macOS, type:

curl -L https://github.com/kubernetes-sigs/cluster-api/releases/download/v0.4.8/clusterctl-darwin-amd64 -o clusterctl

Or if your Mac has an M1 CPU (“Apple Silicon”):

curl -L https://github.com/kubernetes-sigs/cluster-api/releases/download/v0.4.8/clusterctl-darwin-arm64 -o clusterctl

Make the clusterctl binary executable.

chmod +x ./clusterctl

Move the binary into your PATH.

sudo mv ./clusterctl /usr/local/bin/clusterctl

Test to ensure the version you installed is up-to-date:

clusterctl version
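If you script the installation, choosing the right download can be automated. The sketch below uses two hypothetical helpers (clusterctl_asset and clusterctl_download_url are illustrative names, not part of clusterctl); it only knows the three release assets listed above and assumes the clusterctl-<os>-<arch> naming shown in those URLs.

```bash
# Hypothetical helper: map `uname -s` / `uname -m` values to one of the
# clusterctl release assets listed in this guide.
clusterctl_asset() {
  local os="${1:-$(uname -s)}" arch="${2:-$(uname -m)}"
  case "$os/$arch" in
    Linux/x86_64|Linux/amd64)   echo "clusterctl-linux-amd64" ;;
    Darwin/x86_64|Darwin/amd64) echo "clusterctl-darwin-amd64" ;;
    Darwin/arm64)               echo "clusterctl-darwin-arm64" ;;
    *)
      echo "no clusterctl download listed in this guide for $os/$arch" >&2
      return 1
      ;;
  esac
}

# Hypothetical helper: build the release download URL for a given version.
# Remaining arguments (OS, architecture) are passed to clusterctl_asset.
clusterctl_download_url() {
  local version="$1" asset
  shift
  asset="$(clusterctl_asset "$@")" || return 1
  echo "https://github.com/kubernetes-sigs/cluster-api/releases/download/${version}/${asset}"
}
```

For example, the following downloads the binary for the current machine, after which the chmod and mv steps above apply unchanged:

curl -L "$(clusterctl_download_url v0.4.8)" -o clusterctl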

Install clusterctl with Homebrew on macOS and Linux

Install the latest release using homebrew:

brew install clusterctl

Test to ensure the version you installed is up-to-date:

clusterctl version

Initialize the management cluster

Now that we’ve got clusterctl installed and all the prerequisites in place, let’s transform the Kubernetes cluster into a management cluster by using clusterctl init.

The command accepts as input a list of providers to install; when executed for the first time, clusterctl init automatically adds the cluster-api core provider to the list, and, if unspecified, it also adds the kubeadm bootstrap and kubeadm control-plane providers.
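To make this defaulting rule concrete, here is a small model of it in shell. It is an illustrative sketch, not clusterctl’s code: effective_providers is a hypothetical function that only prints the provider names a first run would install, using the names that appear in the sample clusterctl init output in this guide.

```bash
# Illustrative model of the first-run defaulting rule for `clusterctl init`.
# It only prints provider names; it does not install anything.
effective_providers() {
  local infra="" bootstrap="" control_plane=""
  while [ "$#" -gt 0 ]; do
    if [ "$#" -lt 2 ]; then
      echo "missing value for $1" >&2
      return 1
    fi
    case "$1" in
      --infrastructure) infra="$2" ;;
      --bootstrap)      bootstrap="$2" ;;
      --control-plane)  control_plane="$2" ;;
      *) echo "flag not modelled in this sketch: $1" >&2; return 1 ;;
    esac
    shift 2
  done
  echo "cluster-api"                               # core provider, always added
  echo "bootstrap-${bootstrap:-kubeadm}"           # kubeadm if unspecified
  echo "control-plane-${control_plane:-kubeadm}"   # kubeadm if unspecified
  if [ -n "$infra" ]; then
    echo "infrastructure-${infra}"
  fi
}
```

Under this rule, clusterctl init --infrastructure docker is expected to install the same providers as clusterctl init --infrastructure docker --bootstrap kubeadm --control-plane kubeadm; pass --bootstrap or --control-plane explicitly to choose different providers.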

Initialization for common providers

Depending on the infrastructure provider you are planning to use, some additional prerequisites should be satisfied before getting started with Cluster API. See below for the expected settings for common providers.

AWS

Download the latest binary of clusterawsadm from the AWS provider releases and make sure to place it in your PATH.

The clusterawsadm command line utility assists with identity and access management (IAM) for Cluster API Provider AWS.

export AWS_REGION=us-east-1 # This is used to help encode your environment variables

export AWS_ACCESS_KEY_ID=<your-access-key>

export AWS_SECRET_ACCESS_KEY=<your-secret-access-key>

export AWS_SESSION_TOKEN=<session-token> # If you are using Multi-Factor Auth.

# The clusterawsadm utility takes the credentials that you set as environment

# variables and uses them to create a CloudFormation stack in your AWS account

# with the correct IAM resources.

clusterawsadm bootstrap iam create-cloudformation-stack

# Create the base64 encoded credentials using clusterawsadm.

# This command uses your environment variables and encodes

# them in a value to be stored in a Kubernetes Secret.

export AWS_B64ENCODED_CREDENTIALS=$(clusterawsadm bootstrap credentials encode-as-profile)

# Finally, initialize the management cluster

clusterctl init --infrastructure aws

See the AWS provider prerequisites document for more details.

Azure

For more information about authorization, AAD, or requirements for Azure, visit the Azure provider prerequisites document.

export AZURE_SUBSCRIPTION_ID="<SubscriptionId>"

# Create an Azure Service Principal and paste the output here

export AZURE_TENANT_ID="<Tenant>"

export AZURE_CLIENT_ID="<AppId>"

export AZURE_CLIENT_SECRET="<Password>"

# Base64 encode the variables

export AZURE_SUBSCRIPTION_ID_B64="$(echo -n "$AZURE_SUBSCRIPTION_ID" | base64 | tr -d '\n')"

export AZURE_TENANT_ID_B64="$(echo -n "$AZURE_TENANT_ID" | base64 | tr -d '\n')"

export AZURE_CLIENT_ID_B64="$(echo -n "$AZURE_CLIENT_ID" | base64 | tr -d '\n')"

export AZURE_CLIENT_SECRET_B64="$(echo -n "$AZURE_CLIENT_SECRET" | base64 | tr -d '\n')"

# Settings needed for AzureClusterIdentity used by the AzureCluster

export AZURE_CLUSTER_IDENTITY_SECRET_NAME="cluster-identity-secret"

export CLUSTER_IDENTITY_NAME="cluster-identity"

export AZURE_CLUSTER_IDENTITY_SECRET_NAMESPACE="default"

# Create a secret to include the password of the Service Principal identity created in Azure

# This secret will be referenced by the AzureClusterIdentity used by the AzureCluster

kubectl create secret generic "${AZURE_CLUSTER_IDENTITY_SECRET_NAME}" --from-literal=clientSecret="${AZURE_CLIENT_SECRET}"

# Finally, initialize the management cluster

clusterctl init --infrastructure azure

DigitalOcean

export DIGITALOCEAN_ACCESS_TOKEN=<your-access-token>

export DO_B64ENCODED_CREDENTIALS="$(echo -n "${DIGITALOCEAN_ACCESS_TOKEN}" | base64 | tr -d '\n')"

# Initialize the management cluster

clusterctl init --infrastructure digitalocean

Docker

The Docker provider does not require additional prerequisites. You can run:

clusterctl init --infrastructure docker

GCP

# Create the base64 encoded credentials by catting your credentials json.

# This command uses your environment variables and encodes

# them in a value to be stored in a Kubernetes Secret.

export GCP_B64ENCODED_CREDENTIALS=$( cat /path/to/gcp-credentials.json | base64 | tr -d '\n' )

# Finally, initialize the management cluster

clusterctl init --infrastructure gcp
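The Azure, DigitalOcean and GCP steps above all base64-encode a value and strip newlines with tr -d '\n', because a stray trailing newline changes the encoded value and GNU base64 wraps long output across lines. A pair of hypothetical helpers (b64_oneline and b64_file are illustrative names, not part of any provider tooling) captures that pattern:

```bash
# Hypothetical helpers for the base64 steps above.

# Encode a string on a single line. printf '%s' is used instead of echo -n,
# whose handling of -n differs between shells, so no trailing newline is
# ever encoded.
b64_oneline() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Encode a file's contents on a single line (for example, a credentials JSON).
b64_file() {
  base64 < "$1" | tr -d '\n'
}
```

For example, export DO_B64ENCODED_CREDENTIALS="$(b64_oneline "${DIGITALOCEAN_ACCESS_TOKEN}")" produces the same value as the DigitalOcean command above, and b64_file /path/to/gcp-credentials.json matches the GCP one.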

vSphere

# The username used to access the remote vSphere endpoint

export VSPHERE_USERNAME="vi-admin@vsphere.local"

# The password used to access the remote vSphere endpoint

# You may want to set this in ~/.cluster-api/clusterctl.yaml so your password is not in

# bash history

# Single quotes keep interactive bash from treating "!" as history expansion

export VSPHERE_PASSWORD='admin!23'

# Finally, initialize the management cluster

clusterctl init --infrastructure vsphere

For more information about prerequisites, credentials management, or permissions for vSphere, see the vSphere project.

OpenStack

# Initialize the management cluster

clusterctl init --infrastructure openstack

Metal3

Please visit the Metal3 project.

Packet

In order to initialize the Packet provider, you have to expose the environment variable PACKET_API_KEY. This variable is used to authorize the infrastructure provider manager against the Packet API. You can retrieve your token directly from the Packet Portal.

export PACKET_API_KEY="34ts3g4s5g45gd45dhdh"

clusterctl init --infrastructure packet

The output of clusterctl init is similar to this:

Fetching providers

Installing cert-manager Version="v1.5.3"

Waiting for cert-manager to be available...

Installing Provider="cluster-api" Version="v0.4.0" TargetNamespace="capi-system"

Installing Provider="bootstrap-kubeadm" Version="v0.4.0" TargetNamespace="capi-kubeadm-bootstrap-system"

Installing Provider="control-plane-kubeadm" Version="v0.4.0" TargetNamespace="capi-kubeadm-control-plane-system"

Installing Provider="infrastructure-docker" Version="v0.4.0" TargetNamespace="capd-system"

Your management cluster has been initialized successfully!

You can now create your first workload cluster by running the following:

clusterctl generate cluster [name] --kubernetes-version [version] | kubectl apply -f -

Create your first workload cluster

Once the management cluster is ready, you can create your first workload cluster.

Preparing the workload cluster configuration

The clusterctl generate cluster command returns a YAML template for creating a workload cluster.

Required configuration for common providers

Depending on the infrastructure provider you are planning to use, some additional prerequisites should be satisfied before configuring a cluster with Cluster API. Instructions are provided for common providers below.

Otherwise, you can look at the clusterctl generate cluster command documentation for details about how to discover the list of variables required by a cluster template.
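Before generating a template, it can help to fail fast when a required variable is missing. This is a minimal sketch: require_vars is a hypothetical helper, and which names to pass it is up to you (for example, the variables printed by clusterctl generate cluster with --list-variables).

```bash
# Hypothetical helper: report every listed variable that is unset or empty.
# Returns 0 only if all of them have a non-empty value.
require_vars() {
  local missing=0 name value
  for name in "$@"; do
    # Reject anything that is not a valid shell variable name before eval.
    case "$name" in
      ''|[0-9]*|*[!A-Za-z0-9_]*)
        echo "invalid variable name: $name" >&2
        missing=1
        continue
        ;;
    esac
    eval "value=\${$name:-}"   # indirect lookup of the variable's value
    if [ -z "$value" ]; then
      echo "missing required variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For the AWS settings that follow, you might run require_vars AWS_REGION AWS_SSH_KEY_NAME AWS_CONTROL_PLANE_MACHINE_TYPE AWS_NODE_MACHINE_TYPE before calling clusterctl generate cluster.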

AWS

export AWS_REGION=us-east-1

export AWS_SSH_KEY_NAME=default

# Select instance types

export AWS_CONTROL_PLANE_MACHINE_TYPE=t3.large

export AWS_NODE_MACHINE_TYPE=t3.large

See the AWS provider prerequisites document for more details.

Azure

# Name of the Azure datacenter location. Change this value to your desired location.

export AZURE_LOCATION="centralus"

# Select VM types.

export AZURE_CONTROL_PLANE_MACHINE_TYPE="Standard_D2s_v3"

export AZURE_NODE_MACHINE_TYPE="Standard_D2s_v3"

DigitalOcean

A Cluster API-compatible image must be available in your DigitalOcean account. For instructions on how to build a compatible image, see image-builder.

export DO_REGION=nyc1

export DO_SSH_KEY_FINGERPRINT=<your-ssh-key-fingerprint>

export DO_CONTROL_PLANE_MACHINE_TYPE=s-2vcpu-2gb

export DO_CONTROL_PLANE_MACHINE_IMAGE=<your-capi-image-id>

export DO_NODE_MACHINE_TYPE=s-2vcpu-2gb

export DO_NODE_MACHINE_IMAGE=<your-capi-image-id>

Docker

The Docker provider does not require additional configurations for cluster templates.

However, if you require special network settings you can set the following environment variables:

# The list of service CIDR, default ["10.128.0.0/12"]

export SERVICE_CIDR=["10.96.0.0/12"]

# The list of pod CIDR, default ["192.168.0.0/16"]

export POD_CIDR=["192.168.0.0/16"]

# The service domain, default "cluster.local"

export SERVICE_DOMAIN="k8s.test"
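SERVICE_CIDR and POD_CIDR take a list, written as in the comments above. Wrapping the whole value in single quotes (for example, export POD_CIDR='["192.168.0.0/16"]') keeps the inner double quotes from being removed by the shell. If you assemble these values in a script, a hypothetical helper such as cidr_list (an illustrative name, not part of clusterctl) can build the list from one or more CIDRs:

```bash
# Hypothetical helper: format CIDRs as a list value such as ["10.96.0.0/12"].
cidr_list() {
  local out="" cidr
  for cidr in "$@"; do
    # Add a comma separator before every element except the first.
    out="${out}${out:+,}\"${cidr}\""
  done
  printf '[%s]\n' "$out"
}
```

For example: export SERVICE_CIDR="$(cidr_list 10.96.0.0/12)"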

GCP

# Name of the GCP datacenter location. Change this value to your desired location

export GCP_REGION="<GCP_REGION>"

export GCP_PROJECT="<GCP_PROJECT>"

# Make sure to use the same Kubernetes version here as the one used to build the GCE image

export KUBERNETES_VERSION=1.20.9

export GCP_CONTROL_PLANE_MACHINE_TYPE=n1-standard-2

export GCP_NODE_MACHINE_TYPE=n1-standard-2

export GCP_NETWORK_NAME=<GCP_NETWORK_NAME or default>

export CLUSTER_NAME="<CLUSTER_NAME>"

See the GCP provider for more information.

vSphere

It is required to use an official CAPV machine image for your vSphere VM templates. See uploading CAPV machine images for instructions on how to do this.

# The vCenter server IP or FQDN

export VSPHERE_SERVER="10.0.0.1"

# The vSphere datacenter to deploy the management cluster on

export VSPHERE_DATACENTER="SDDC-Datacenter"

# The vSphere datastore to deploy the management cluster on

export VSPHERE_DATASTORE="vsanDatastore"

# The VM network to deploy the management cluster on

export VSPHERE_NETWORK="VM Network"

# The vSphere resource pool for your VMs

export VSPHERE_RESOURCE_POOL="*/Resources"

# The VM folder for your VMs. Set to "" to use the root vSphere folder

export VSPHERE_FOLDER="vm"

# The VM template to use for your VMs

export VSPHERE_TEMPLATE="ubuntu-1804-kube-v1.17.3"

# The VM template to use for the HAProxy load balancer of the management cluster

export VSPHERE_HAPROXY_TEMPLATE="capv-haproxy-v0.6.0-rc.2"

# The public ssh authorized key on all machines

export VSPHERE_SSH_AUTHORIZED_KEY="ssh-rsa AAAAB3N..."

clusterctl init --infrastructure vsphere

For more information about prerequisites, credentials management, or permissions for vSphere, see the vSphere getting started guide.

OpenStack

A Cluster API-compatible image must be available in your OpenStack environment. For instructions on how to build a compatible image, see image-builder. Depending on your OpenStack and underlying hypervisor the following options might be of interest:

To see all required OpenStack environment variables execute:

clusterctl generate cluster --infrastructure openstack --list-variables capi-quickstart

The following script can be used to export some of them:

wget https://raw.githubusercontent.com/kubernetes-sigs/cluster-api-provider-openstack/master/templates/env.rc -O /tmp/env.rc

source /tmp/env.rc <path/to/clouds.yaml> <cloud>

Apart from the script, the following OpenStack environment variables are required.

# The list of nameservers for OpenStack Subnet being created.

# Set this value when you need to create a new network/subnet and access through DNS is required.

export OPENSTACK_DNS_NAMESERVERS=<dns nameserver>

# FailureDomain is the failure domain the machine will be created in.

export OPENSTACK_FAILURE_DOMAIN=<availability zone name>

# The flavor reference for your control plane server instances.

export OPENSTACK_CONTROL_PLANE_MACHINE_FLAVOR=<flavor>

# The flavor reference for your worker node server instances.

export OPENSTACK_NODE_MACHINE_FLAVOR=<flavor>

# The name of the image to use for your server instance. If the RootVolume is specified, this will be ignored and the rootVolume will be used directly.

export OPENSTACK_IMAGE_NAME=<image name>

# The SSH key pair name

export OPENSTACK_SSH_KEY_NAME=<ssh key pair name>

A full configuration reference can be found in configuration.md.

Metal3

# The URL of the kernel to deploy.

export DEPLOY_KERNEL_URL="http://172.22.0.1:6180/images/ironic-python-agent.kernel"

# The URL of the ramdisk to deploy.

export DEPLOY_RAMDISK_URL="http://172.22.0.1:6180/images/ironic-python-agent.initramfs"

# The URL of the Ironic endpoint.

export IRONIC_URL="http://172.22.0.1:6385/v1/"

# The URL of the Ironic inspector endpoint.

export IRONIC_INSPECTOR_URL="http://172.22.0.1:5050/v1/"

# Do not use a dedicated CA certificate for Ironic API. Any value provided in this variable disables additional CA certificate validation.

# To provide a CA certificate, leave this variable unset. If unset, then IRONIC_CA_CERT_B64 must be set.

export IRONIC_NO_CA_CERT=true

# Disables basic authentication for Ironic API. Any value provided in this variable disables authentication.

# To enable authentication, leave this variable unset. If unset, then IRONIC_USERNAME and IRONIC_PASSWORD must be set.

export IRONIC_NO_BASIC_AUTH=true

# Disables basic authentication for Ironic inspector API. Any value provided in this variable disables authentication.

# To enable authentication, leave this variable unset. If unset, then IRONIC_INSPECTOR_USERNAME and IRONIC_INSPECTOR_PASSWORD must be set.

export IRONIC_INSPECTOR_NO_BASIC_AUTH=true

Please visit the Metal3 getting started guide for more details.

Packet

There are a couple of required environment variables that you have to expose in order to get a well-tuned and functioning workload cluster; they are all listed here:

# The project where your cluster will be placed.

# Create one in the Packet Portal if you do not have one already.

export PROJECT_ID="5yd4thd-5h35-5hwk-1111-125gjej40930"

# The facility where you want your cluster to be provisioned

export FACILITY="ewr1"

# The operating system used to provision the device

export NODE_OS="ubuntu_18_04"

# The ssh key name you loaded in Packet Portal

export SSH_KEY="my-ssh"

export POD_CIDR="192.168.0.0/16"

export SERVICE_CIDR="172.26.0.0/16"

export CONTROLPLANE_NODE_TYPE="t1.small"

export WORKER_NODE_TYPE="t1.small"

Generating the cluster configuration

For the purpose of this tutorial, we’ll name our cluster capi-quickstart.

clusterctl generate cluster capi-quickstart \
  --kubernetes-version v1.22.0 \
  --control-plane-machine-count=3 \
  --worker-machine-count=3 \
  > capi-quickstart.yaml

If you are using the Docker provider, generate the configuration with the development flavor instead:

clusterctl generate cluster capi-quickstart --flavor development \
  --kubernetes-version v1.22.0 \
  --control-plane-machine-count=3 \
  --worker-machine-count=3 \
  > capi-quickstart.yaml

This creates a YAML file named capi-quickstart.yaml with a predefined list of Cluster API objects: Cluster, Machines, Machine Deployments, etc.

The file can be modified using your editor of choice.

See clusterctl generate cluster for more details.
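To see at a glance which objects the generated file contains, you can count its top-level kind: lines. This is a rough sketch using awk; list_kinds is a hypothetical helper, and it assumes each object’s kind appears unindented at the start of a line, as is typical of generated manifests (nested references such as infrastructureRef are indented and so are skipped).

```bash
# Hypothetical helper: count the top-level kinds in a multi-document YAML file.
# Only unindented "kind:" lines are counted, so nested references are skipped.
list_kinds() {
  awk '/^kind:/ { count[$2]++ } END { for (k in count) print k, count[k] }' "$1" | sort
}
```

For example: list_kinds capi-quickstart.yaml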

Apply the workload cluster

When ready, run the following command to apply the cluster manifest.

kubectl apply -f capi-quickstart.yaml

The output is similar to this:

cluster.cluster.x-k8s.io/capi-quickstart created

awscluster.infrastructure.cluster.x-k8s.io/capi-quickstart created

kubeadmcontrolplane.controlplane.cluster.x-k8s.io/capi-quickstart-control-plane created

awsmachinetemplate.infrastructure.cluster.x-k8s.io/capi-quickstart-control-plane created

machinedeployment.cluster.x-k8s.io/capi-quickstart-md-0 created

awsmachinetemplate.infrastructure.cluster.x-k8s.io/capi-quickstart-md-0 created

kubeadmconfigtemplate.bootstrap.cluster.x-k8s.io/capi-quickstart-md-0 created

Accessing the workload cluster

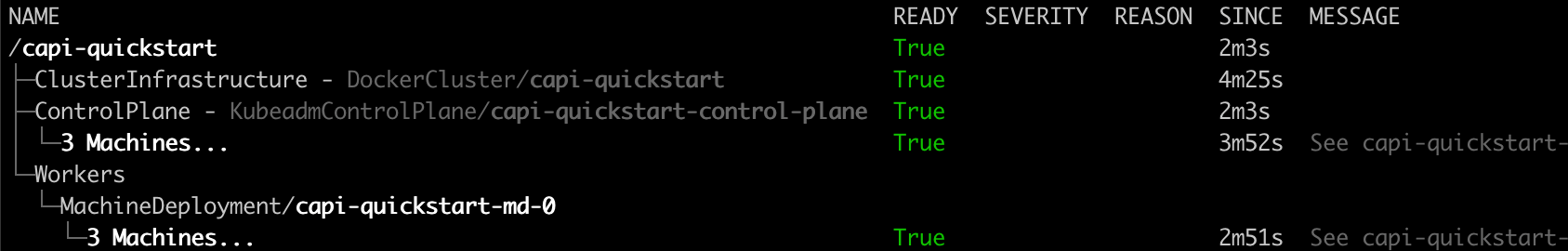

The cluster will now start provisioning. You can check status with:

kubectl get cluster

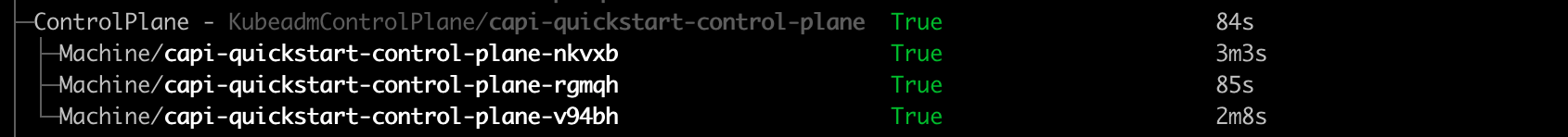

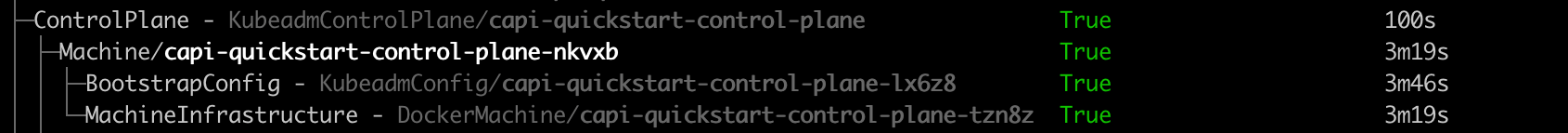

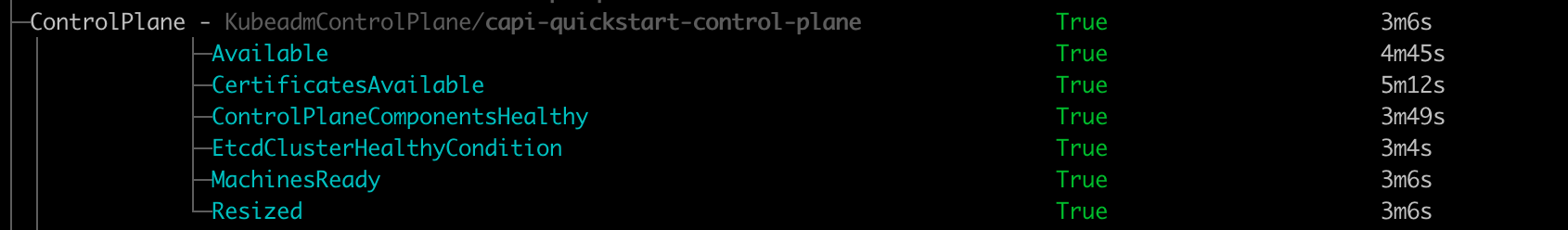

You can also get an “at a glance” view of the cluster and its resources by running:

clusterctl describe cluster capi-quickstart

To verify the first control plane is up:

kubectl get kubeadmcontrolplane

You should see output similar to this:

NAME INITIALIZED API SERVER AVAILABLE VERSION REPLICAS READY UPDATED UNAVAILABLE

capi-quickstart-control-plane true v1.21.2 3 3 3
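Provisioning takes a while, so rather than re-running the command by hand you can poll until the control plane is initialized. The sketch below assumes the INITIALIZED column reflects the KubeadmControlPlane’s status.initialized field; wait_for is a hypothetical helper, and the attempt count and delay in the example are arbitrary values.

```bash
# Hypothetical helper: run a command repeatedly until it succeeds.
# Usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_for() {
  if [ "$#" -lt 3 ]; then
    echo "usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]" >&2
    return 2
  fi
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0          # the command succeeded
    fi
    if [ "$i" -lt "$attempts" ]; then
      sleep "$delay"    # wait before the next attempt, but not after the last
    fi
    i=$((i + 1))
  done
  return 1              # every attempt failed
}
```

For example, the following retries every 10 seconds for up to about ten minutes:

wait_for 60 10 sh -c '[ "$(kubectl get kubeadmcontrolplane capi-quickstart-control-plane -o jsonpath={.status.initialized})" = true ]'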

After the first control plane node is up and running, we can retrieve the workload cluster kubeconfig:

clusterctl get kubeconfig capi-quickstart > capi-quickstart.kubeconfig

Deploy a CNI solution

Calico is used here as an example.

kubectl --kubeconfig=./capi-quickstart.kubeconfig \
  apply -f https://docs.projectcalico.org/v3.20/manifests/calico.yaml

After a short while, our nodes should be running and in the Ready state. Let’s check the status using kubectl get nodes:

kubectl --kubeconfig=./capi-quickstart.kubeconfig get nodes

Azure does not currently support Calico networking. As a workaround, it is recommended that Azure clusters use the Calico spec below, which uses VXLAN.

kubectl --kubeconfig=./capi-quickstart.kubeconfig \
  apply -f https://raw.githubusercontent.com/kubernetes-sigs/cluster-api-provider-azure/main/templates/addons/calico.yaml

After a short while, our nodes should be running and in the Ready state. Let’s check the status using kubectl get nodes:

kubectl --kubeconfig=./capi-quickstart.kubeconfig get nodes
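To script the Ready check, you can count Ready nodes from the table kubectl prints. This is a minimal sketch: count_ready_nodes is a hypothetical helper that reads kubectl get nodes --no-headers output on standard input and assumes STATUS is the second column (the default layout); nodes reported as, say, Ready,SchedulingDisabled are not counted.

```bash
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Expects `kubectl get nodes --no-headers` output on stdin, where the second
# column is STATUS.
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
```

For example:

kubectl --kubeconfig=./capi-quickstart.kubeconfig get nodes --no-headers | count_ready_nodes

With three control plane and three worker machines, as generated earlier in this tutorial, you would expect this to print 6 once every node is Ready.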

Clean Up

Delete the workload cluster:

kubectl delete cluster capi-quickstart

Delete the management cluster:

kind delete cluster

Next steps

See the clusterctl documentation for more detail about clusterctl supported actions.

Concepts

Management cluster

A Kubernetes cluster that manages the lifecycle of Workload Clusters. A Management Cluster is also where one or more Infrastructure Providers run, and where resources such as Machines are stored.

Workload cluster

A Kubernetes cluster whose lifecycle is managed by a Management Cluster.

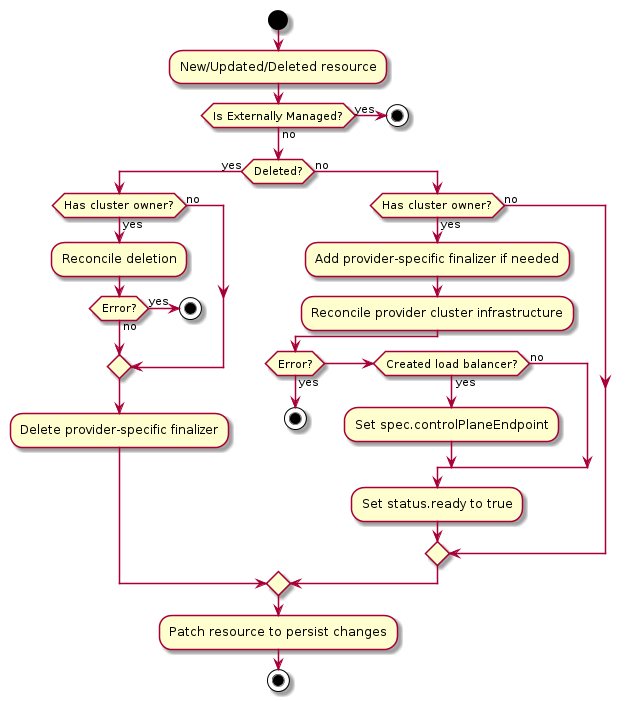

Infrastructure provider

A source of computational resources, such as compute and networking. For example, cloud Infrastructure Providers include AWS, Azure, and Google, and bare metal Infrastructure Providers include VMware, MAAS, and metal3.io.

When there is more than one way to obtain resources from the same Infrastructure Provider (such as AWS offering both EC2 and EKS), each way is referred to as a variant.

Bootstrap provider

The Bootstrap Provider is responsible for:

- Generating the cluster certificates, if not otherwise specified

- Initializing the control plane, and gating the creation of other nodes until it is complete

- Joining control plane and worker nodes to the cluster

Control plane

The control plane is a set of components that serve the Kubernetes API and continuously reconcile desired state using control loops.

- Machine-based control planes are the most common type. Dedicated machines are provisioned, running static pods for components such as kube-apiserver, kube-controller-manager and kube-scheduler.

- Pod-based deployments require an external hosting cluster. The control plane components are deployed using standard Deployment and StatefulSet objects and the API is exposed using a Service.

- External control planes are offered and controlled by some system other than Cluster API, such as GKE, AKS, EKS, or IKS.

As of v1alpha2, Machine-Based is the only control plane type that Cluster API supports.

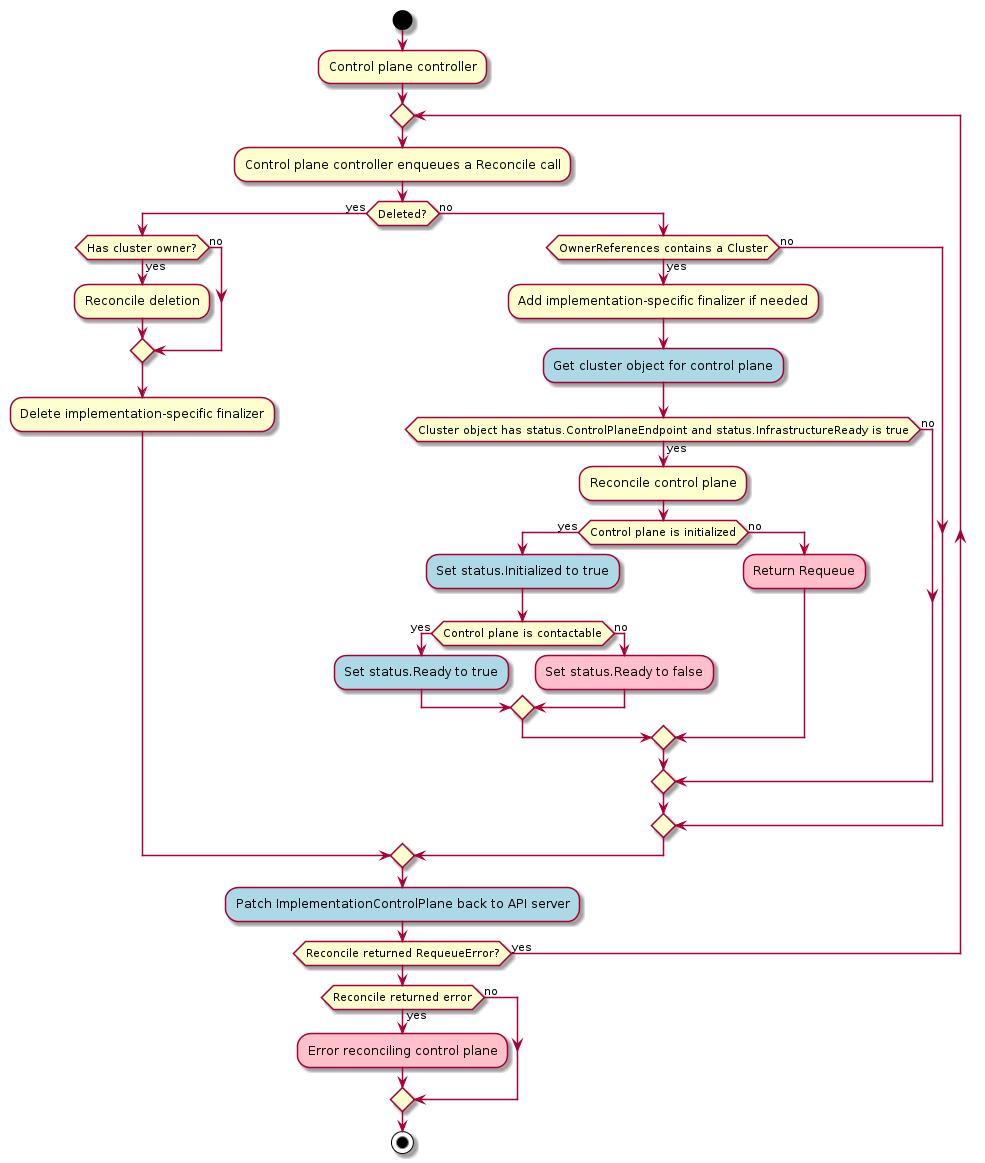

The default provider uses kubeadm to bootstrap the control plane. As of v1alpha3, it exposes the configuration via the KubeadmControlPlane object. The controller, capi-kubeadm-control-plane-controller-manager, can then create Machine and BootstrapConfig objects based on the requested replicas in the KubeadmControlPlane object.

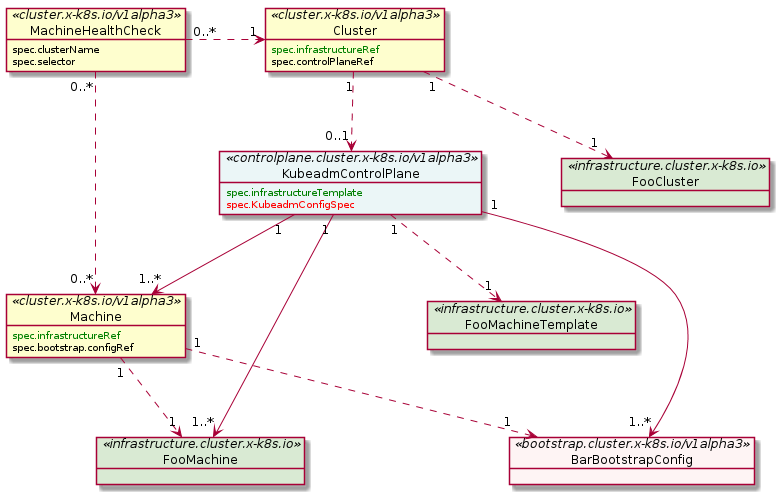

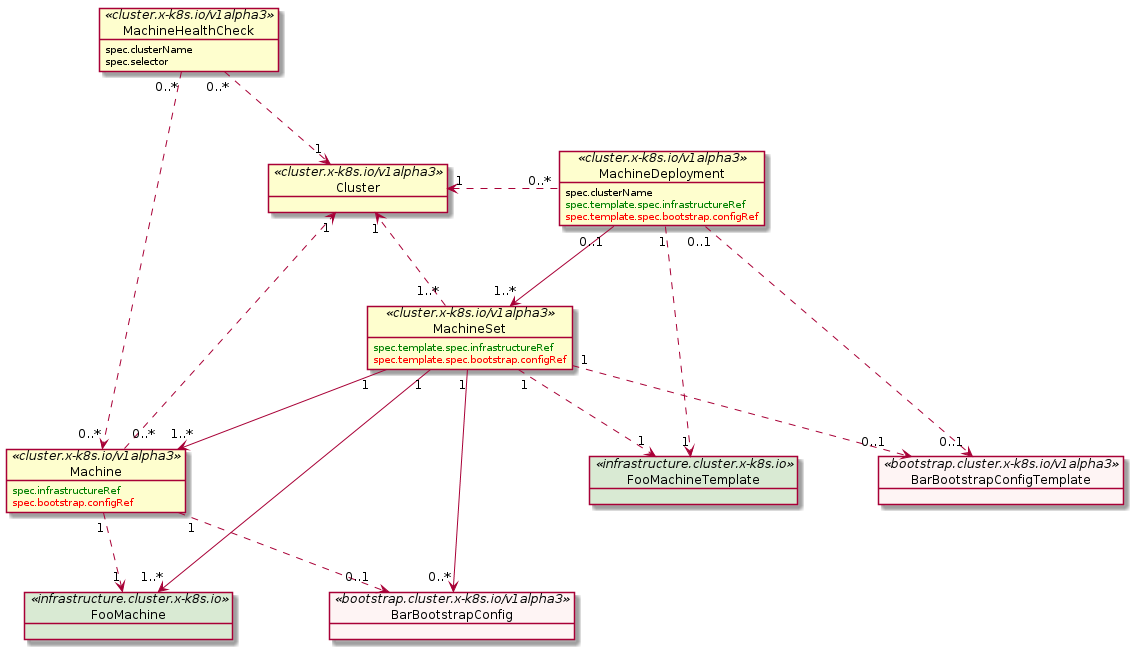

Custom Resource Definitions (CRDs)

A CustomResourceDefinition is a built-in resource that lets you extend the Kubernetes API. Each CustomResourceDefinition represents a customization of a Kubernetes installation. The Cluster API provides and relies on several CustomResourceDefinitions:

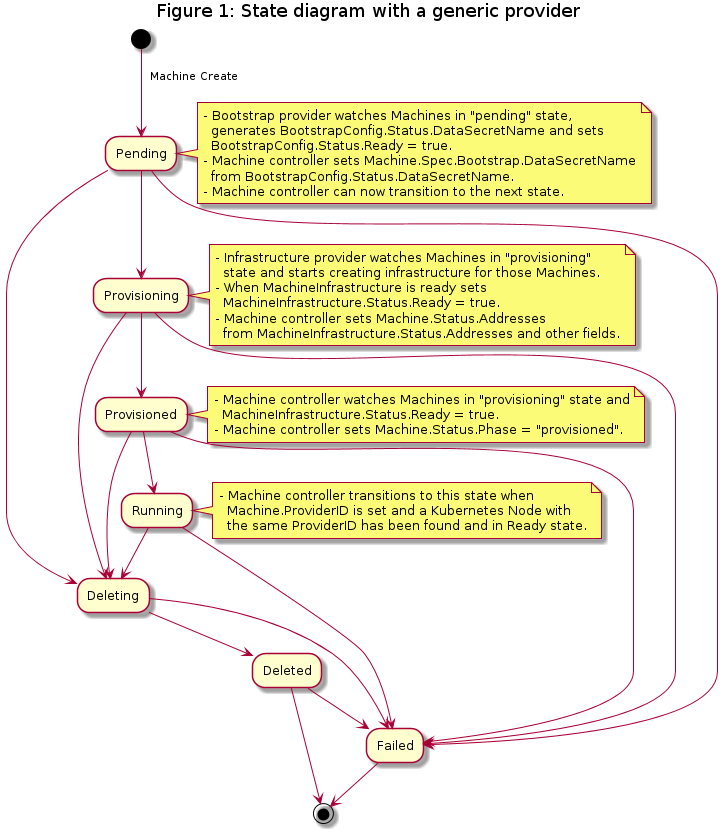

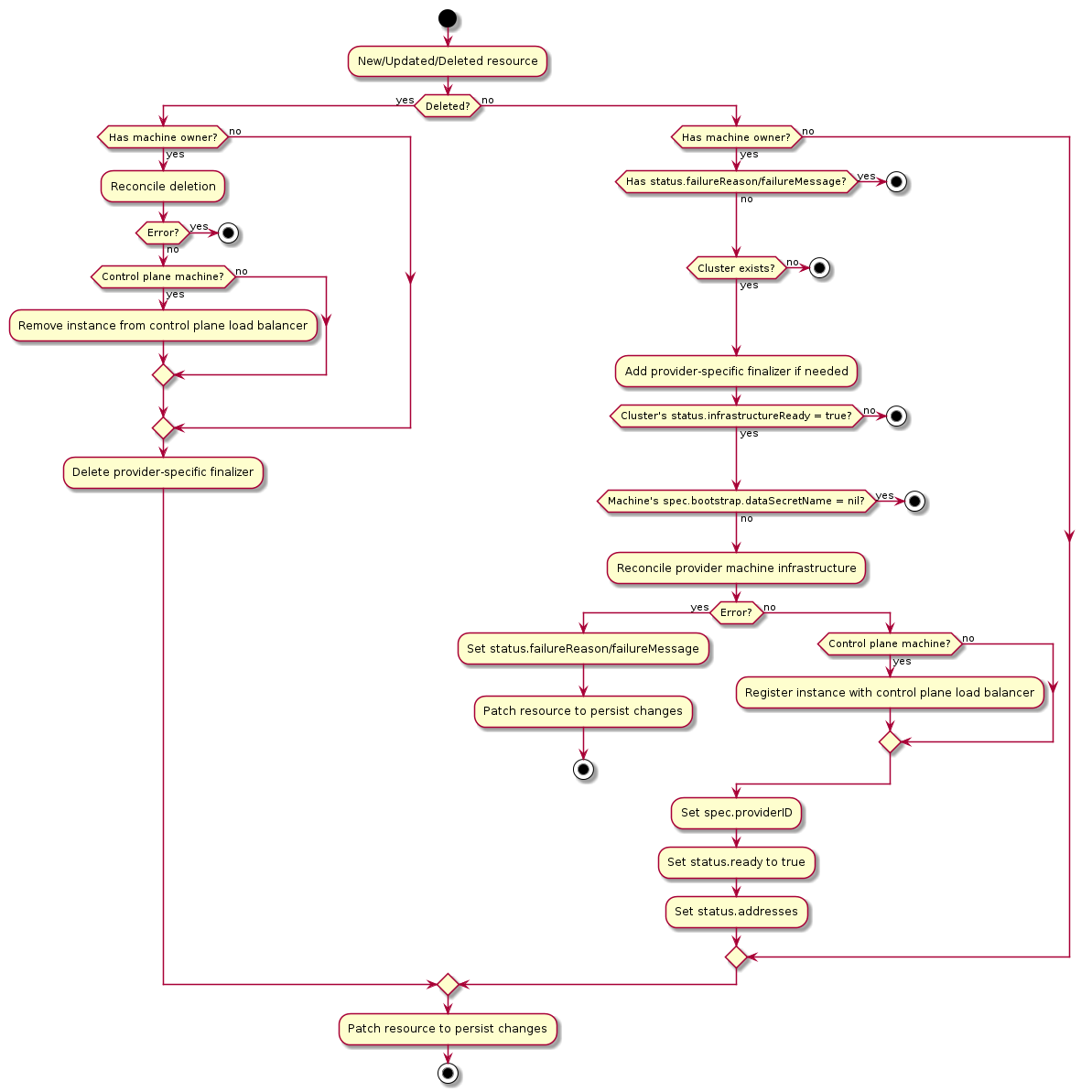

Machine

A “Machine” is the declarative spec for an infrastructure component hosting a Kubernetes Node (for example, a VM). If a new Machine object is created, a provider-specific controller will provision and install a new host to register as a new Node matching the Machine spec. If the Machine’s spec is updated, the controller replaces the host with a new one matching the updated spec. If a Machine object is deleted, its underlying infrastructure and corresponding Node will be deleted by the controller.

Common fields such as Kubernetes version are modeled as fields on the Machine’s spec. Any information that is provider-specific is part of the InfrastructureRef and is not portable between different providers.

Machine Immutability (In-place Upgrade vs. Replace)

From the perspective of Cluster API, all Machines are immutable: once they are created, they are never updated (except for labels, annotations and status), only deleted.

For this reason, MachineDeployments are preferable. MachineDeployments handle changes to machines by replacing them, in the same way core Deployments handle changes to Pod specifications.

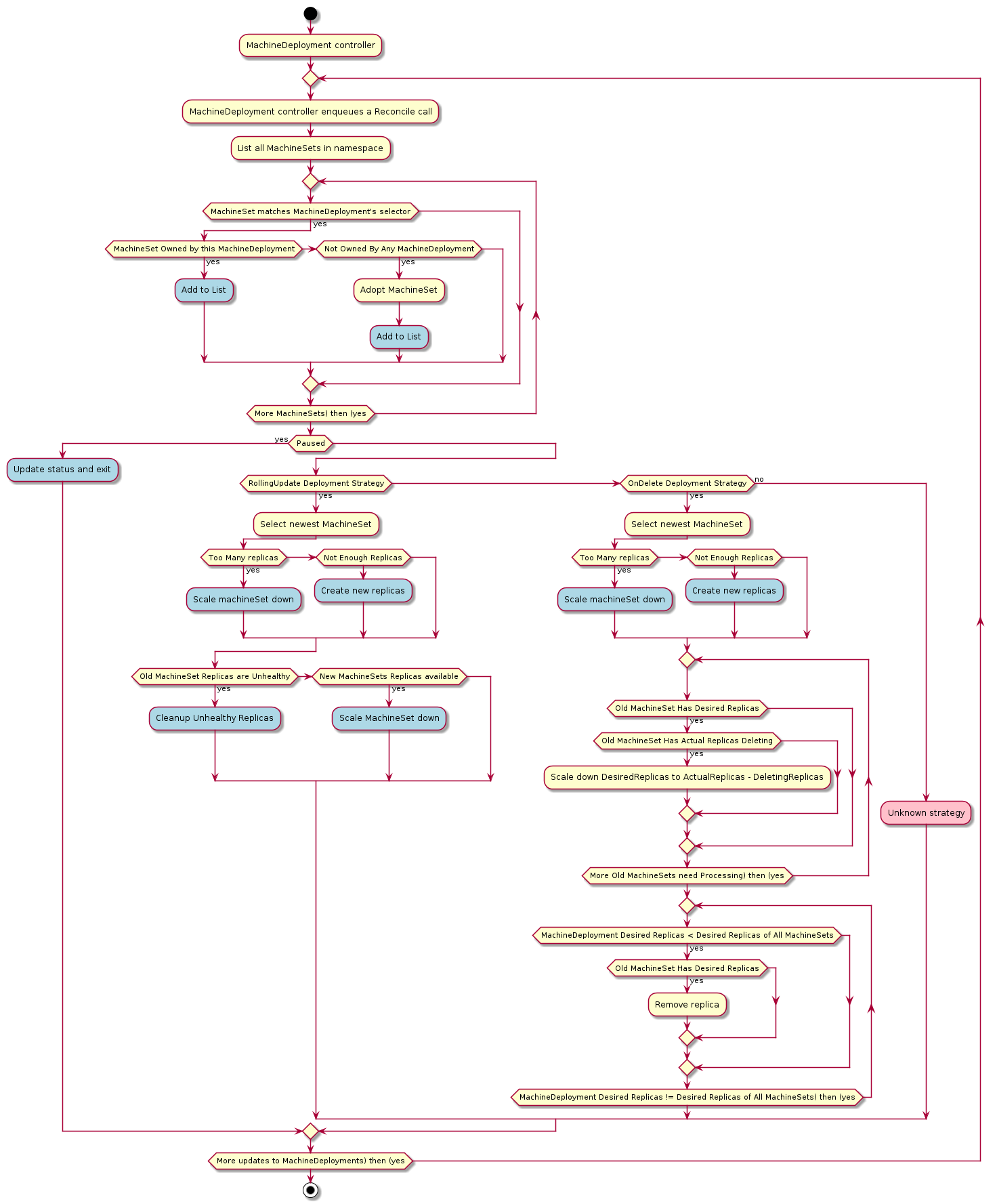

MachineDeployment

A MachineDeployment provides declarative updates for Machines and MachineSets.

A MachineDeployment works similarly to a core Kubernetes Deployment. A MachineDeployment reconciles changes to a Machine spec by rolling out changes to two MachineSets: the old one and the newly updated one.
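The rollout between the two MachineSets can be pictured with a simplified model. The shell function below is not Cluster API controller code; it assumes a rolling update with maxSurge=1 and maxUnavailable=0 (one extra Machine at a time, never fewer than the desired count) and prints how many Machines the old and new MachineSets hold after each step.

```bash
# Simplified model (not Cluster API controller code) of a MachineDeployment
# rolling update with maxSurge=1 and maxUnavailable=0.
simulate_rollout() {
  local replicas="$1" old="$1" new=0
  echo "old=$old new=$new"
  while [ "$new" -lt "$replicas" ]; do
    new=$((new + 1))   # surge: the new MachineSet gains one Machine
    echo "old=$old new=$new"
    old=$((old - 1))   # the old MachineSet gives up one Machine
    echo "old=$old new=$new"
  done
}
```

Running simulate_rollout 3 prints seven lines, ending with old=0 new=3; at no point are there fewer than 3 or more than 4 Machines in total.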

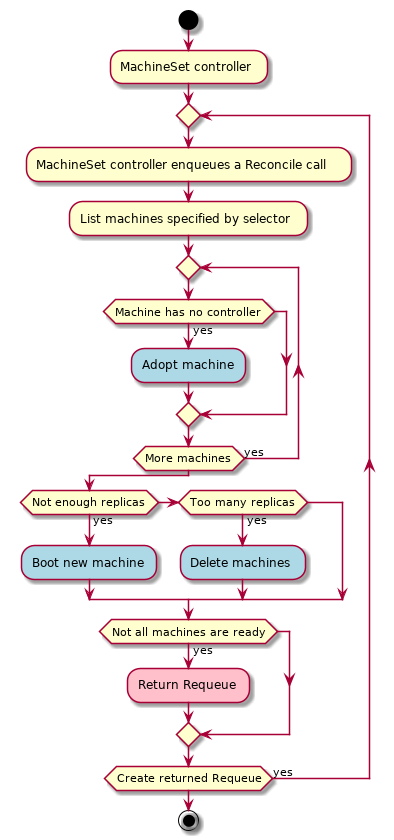

MachineSet

A MachineSet’s purpose is to maintain a stable set of Machines running at any given time.

A MachineSet works similarly to a core Kubernetes ReplicaSet. MachineSets are not meant to be used directly, but are the mechanism MachineDeployments use to reconcile desired state.

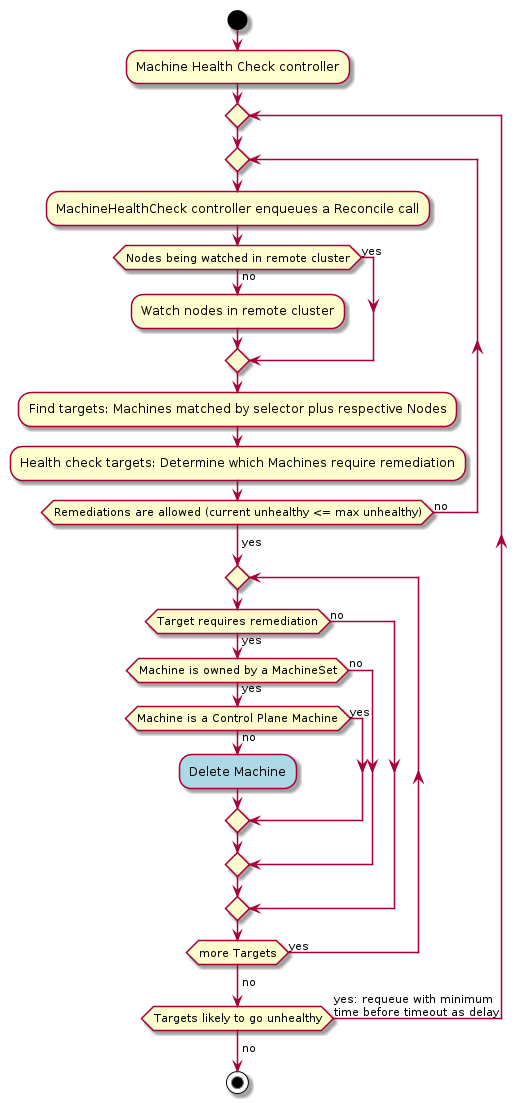

MachineHealthCheck

A MachineHealthCheck defines the conditions under which a Node should be considered unhealthy.

If the Node matches these unhealthy conditions for a given user-configured time, the MachineHealthCheck initiates remediation of the Node. Remediation of Nodes is performed by deleting the corresponding Machine.

MachineHealthChecks will only remediate Nodes if they are owned by a MachineSet. This ensures that the Kubernetes cluster does not lose capacity, since the MachineSet will create a new Machine to replace the failed Machine.
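As an illustration, a MachineHealthCheck targeting the worker Machines of the quick-start cluster might look like the manifest written below. It is a sketch under assumptions: the v1alpha4 API version matches the v0.4.x releases used in the Quick Start, the cluster.x-k8s.io/deployment-name label selects Machines of the capi-quickstart-md-0 MachineDeployment, and the name, 5-minute timeouts and 40% maxUnhealthy are example values to adjust.

```bash
# Write an example MachineHealthCheck manifest; review it before applying.
# The quoted 'EOF' stops the shell from expanding anything inside.
cat > capi-quickstart-mhc.yaml <<'EOF'
apiVersion: cluster.x-k8s.io/v1alpha4
kind: MachineHealthCheck
metadata:
  name: capi-quickstart-node-unhealthy-5m
spec:
  clusterName: capi-quickstart
  # Target the Machines of the worker MachineDeployment, which are owned by
  # its MachineSets, so remediated Machines get replaced.
  selector:
    matchLabels:
      cluster.x-k8s.io/deployment-name: capi-quickstart-md-0
  # Remediate if the Node's Ready condition stays Unknown or False for 5 minutes.
  unhealthyConditions:
    - type: Ready
      status: Unknown
      timeout: 300s
    - type: Ready
      status: "False"
      timeout: 300s
  # Stop remediating if more than 40% of the targeted Machines are unhealthy.
  maxUnhealthy: 40%
EOF
```

Apply it to the management cluster with kubectl apply -f capi-quickstart-mhc.yaml.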

BootstrapData

BootstrapData contains the Machine or Node role-specific initialization data (usually cloud-init) used by the Infrastructure Provider to bootstrap a Machine into a Node.

Personas

This document describes the personas for the Cluster API 1.0 project as driven from use cases.

We are marking a “proposed priority for project at this time” per use case. This is not intended to say that these use cases aren’t awesome or important. They are intended to indicate where we, as a project, have received a great deal of interest, and as a result where we think we should invest right now to get the most users for our project. If interest grows in other areas, they will be elevated. And, since this is an open source project, if you want to drive feature development for a less-prioritized persona, we absolutely encourage you to join us and do that.

Use-case driven personas

Service Provider: Managed Kubernetes

Managed Kubernetes is an offering in which a provider is automating the lifecycle management of Kubernetes clusters, including full control planes that are available to, and used directly by, the customer.

Proposed priority for project at this time: High

There are several projects from several companies that are building out proposed managed Kubernetes offerings (Project Pacific’s Kubernetes Service from VMware, Microsoft Azure, Google Cloud, Red Hat) and they have all expressed a desire to use Cluster API. This looks like a good place to make sure Cluster API works well, and then expand to other use cases.

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Yes |
| Are control plane nodes exposed to this user? | Yes |
| How many clusters are being managed via this user? | Many |
| Who is the CAPI admin in this scenario? | Platform Operator |
| Cloud / On-Prem | Both |
| Upgrade strategies desired? | Need to gather data from users |
| How does this user interact with Cluster API? | API |
| ETCD deployment | Need to gather data from users |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Service Provider: Kubernetes-as-a-Service

Examples of a Kubernetes-as-a-Service provider include services such as Red Hat’s hosted OpenShift, AKS, GKE, and EKS. The cloud services manage the control plane, often giving those cloud resources away “for free,” and the customers spin up and down their own worker nodes.

Proposed priority for project at this time: Medium

Existing Kubernetes-as-a-Service providers, e.g. AKS and GKE, have indicated interest in replacing their off-tree automation with Cluster API. However, since they already had to build their own automation and it is currently “getting the job done,” switching to Cluster API is not a top priority for them, although it is desirable.

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Need to gather data from users |
| Are control plane nodes exposed to this user? | No |
| How many clusters are being managed via this user? | Many |
| Who is the CAPI admin in this scenario? | Platform itself (AKS, GKE, etc.) |
| Cloud / On-Prem | Cloud |
| Upgrade strategies desired? | tear down/replace (need confirmation from platforms) |
| How does this user interact with Cluster API? | API |
| ETCD deployment | Need to gather data from users |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Cluster API Developer

The Cluster API developer is a developer of Cluster API who needs tools and services to make their development experience more productive and pleasant. It’s also important to take a look at the on-boarding experience for new developers to make sure we’re building out a project that other people can more easily submit patches and features to, to encourage inclusivity and welcome new contributors.

Proposed priority for project at this time: Low

We think we’re in a good place right now, and while we welcome contributions to improve the development experience of the project, it should not be the primary product focus of the open source development team to make development better for ourselves.

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Yes |
| Are control plane nodes exposed to this user? | Yes |
| How many clusters are being managed via this user? | Many |
| Who is the CAPI admin in this scenario? | Platform Operator |
| Cloud / On-Prem | Both |
| Upgrade strategies desired? | Need to gather data from users |
| How does this user interact with Cluster API? | API |
| ETCD deployment | Need to gather data from users |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Raw API Consumers

Examples of raw API consumers include a tool like Prow, a customized enterprise platform built on top of Cluster API, or perhaps an advanced “give me a Kubernetes cluster” button exposing some customization that is built using Cluster API.

Proposed priority for project at this time: Low

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Yes |
| Are control plane nodes exposed to this user? | Yes |
| How many clusters are being managed via this user? | Many |
| Who is the CAPI admin in this scenario? | Platform Operator |
| Cloud / On-Prem | Both |
| Upgrade strategies desired? | Need to gather data from users |
| How does this user interact with Cluster API? | API |
| ETCD deployment | Need to gather data from users |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Tooling: Provisioners

Examples of this use case, in which a tooling provisioner uses Cluster API to automate behavior, include tools such as kops and kubicorn.

Proposed priority for project at this time: Low

Maintainers of tools such as kops have indicated interest in using Cluster API, but they have also indicated they do not have much time to take on the work. If this changes, this use case would increase in priority.

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Need to gather data from tooling maintainers |
| Are control plane nodes exposed to this user? | Yes |
| How many clusters are being managed via this user? | One (per execution) |
| Who is the CAPI admin in this scenario? | Kubernetes Platform Consumer |
| Cloud / On-Prem | Cloud |
| Upgrade strategies desired? | Need to gather data from users |
| How does this user interact with Cluster API? | CLI |
| ETCD deployment | (Stacked or external) AND new |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Service Provider: End User/Consumer

This user would be an end user or consumer who is given direct access to Cluster API via their service provider to manage Kubernetes clusters. While some commercial projects plan on doing this (Project Pacific, others), they are offering it as a “super user” feature behind a “Managed Kubernetes” offering.

Proposed priority for project at this time: Low

This is a use case we should keep an eye on to see how people use Cluster API directly, but at this time we think the more relevant use case is people building managed offerings on top of Cluster API.

Feature matrix

| Question | Answer |
|---|---|
| Is Cluster API exposed to this user? | Yes |
| Are control plane nodes exposed to this user? | Yes |
| How many clusters are being managed via this user? | Many |
| Who is the CAPI admin in this scenario? | Platform Operator |
| Cloud / On-Prem | Both |
| Upgrade strategies desired? | Need to gather data from users |
| How does this user interact with Cluster API? | API |
| ETCD deployment | Need to gather data from users |
| Does this user have a preference for the control plane running on pods vs. vm vs. something else? | Need to gather data from users |

Cluster Management Tasks

This section provides details for some of the operations that need to be performed when managing clusters.

Certificate Management

This section details some tasks related to certificate management.

Using Custom Certificates

Cluster API expects certificates and keys used for bootstrapping to follow the below convention. CABPK generates new certificates using this convention if they do not already exist.

Each certificate must be stored in a single secret named one of:

| Name | Type | Example |
|---|---|---|
| [cluster name]-ca | CA | openssl req -x509 -subj "/CN=Kubernetes API" -new -newkey rsa:2048 -nodes -keyout tls.key -sha256 -days 3650 -out tls.crt |
| [cluster name]-etcd | CA | openssl req -x509 -subj "/CN=ETCD CA" -new -newkey rsa:2048 -nodes -keyout tls.key -sha256 -days 3650 -out tls.crt |
| [cluster name]-proxy | CA | openssl req -x509 -subj "/CN=Front-End Proxy" -new -newkey rsa:2048 -nodes -keyout tls.key -sha256 -days 3650 -out tls.crt |
| [cluster name]-sa | Key Pair | openssl genrsa -out tls.key 2048 && openssl rsa -in tls.key -pubout -out tls.crt |

Example

apiVersion: v1
kind: Secret
metadata:
  name: cluster1-ca
type: kubernetes.io/tls
data:
  tls.crt: <base 64 encoded PEM>
  tls.key: <base 64 encoded PEM>
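The naming convention and Secret layout above can be sketched as a small Python helper that builds the manifest as a plain dictionary. This is a minimal sketch, not part of Cluster API: `cert_secret_manifest` and `CERT_SUFFIXES` are hypothetical names, and the resulting dictionary would still need to be serialized (for example to YAML) and applied to the management cluster.

```python
import base64

# Hypothetical helper. Suffixes come from the naming table above:
# "ca", "etcd" and "proxy" hold CAs, "sa" holds the service account key pair.
CERT_SUFFIXES = ("ca", "etcd", "proxy", "sa")


def cert_secret_manifest(cluster_name, suffix, crt_pem, key_pem):
    """Build a kubernetes.io/tls Secret named "<cluster name>-<suffix>".

    crt_pem and key_pem are raw PEM bytes; Secret `data` values must be
    base64-encoded, so they are encoded here.
    """
    if suffix not in CERT_SUFFIXES:
        raise ValueError(f"unknown certificate suffix: {suffix!r}")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": f"{cluster_name}-{suffix}"},
        "type": "kubernetes.io/tls",
        "data": {
            "tls.crt": base64.b64encode(crt_pem).decode("ascii"),
            "tls.key": base64.b64encode(key_pem).decode("ascii"),
        },
    }


if __name__ == "__main__":
    secret = cert_secret_manifest("cluster1", "ca", b"<cert PEM>", b"<key PEM>")
    print(secret["metadata"]["name"])  # cluster1-ca
```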

Generating a Kubeconfig with your own CA

1. Create a new Certificate Signing Request (CSR) for the system:masters Kubernetes role, or specify any other role under CN:

   openssl req -subj "/CN=system:masters" -new -newkey rsa:2048 -nodes -out admin.csr -keyout admin.key

2. Sign the CSR using the [cluster-name]-ca key:

   openssl x509 -req -in admin.csr -CA tls.crt -CAkey tls.key -CAcreateserial -out admin.crt -days 5 -sha256

3. Update your kubeconfig with the signed certificate and its key:

   kubectl config set-credentials cluster-admin --client-certificate=admin.crt --client-key=admin.key --embed-certs=true

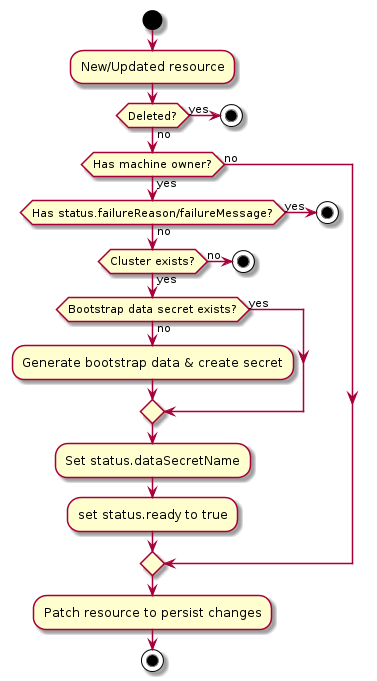

Cluster API bootstrap provider kubeadm

What is the Cluster API bootstrap provider kubeadm?

Cluster API bootstrap provider Kubeadm (CABPK) is a component responsible for generating a cloud-init script to turn a Machine into a Kubernetes Node. This implementation uses kubeadm for Kubernetes bootstrap.

Resources

How does CABPK work?

CABPK is integrated into cluster-api-manager. Assuming you’ve set up CAPI and the Docker manager (CAPD), create a Cluster object and its corresponding DockerCluster infrastructure object.

kind: DockerCluster
apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-cluster-docker
---
kind: Cluster
apiVersion: cluster.x-k8s.io/v1alpha3
metadata:
  name: my-cluster
spec:
  infrastructureRef:
    kind: DockerCluster
    apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
    name: my-cluster-docker

Now you can start creating machines by defining a Machine, its corresponding DockerMachine object, and the KubeadmConfig bootstrap object.

kind: KubeadmConfig
apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-control-plane1-config
spec:
  initConfiguration:
    nodeRegistration:
      kubeletExtraArgs:
        eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%
  clusterConfiguration:
    controllerManager:
      extraArgs:
        enable-hostpath-provisioner: "true"
---
kind: DockerMachine
apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-control-plane1-docker
---
kind: Machine
apiVersion: cluster.x-k8s.io/v1alpha3
metadata:
  name: my-control-plane1
  labels:
    cluster.x-k8s.io/cluster-name: my-cluster
    cluster.x-k8s.io/control-plane: "true"
    set: controlplane
spec:
  bootstrap:
    configRef:
      kind: KubeadmConfig
      apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
      name: my-control-plane1-config
  infrastructureRef:
    kind: DockerMachine
    apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
    name: my-control-plane1-docker
  version: "v1.19.1"

CABPK’s main responsibility is to convert a KubeadmConfig bootstrap object into a cloud-init script that is going to turn a Machine into a Kubernetes Node using kubeadm.

The cloud-init script will be saved into a Secret whose name is stored in KubeadmConfig.Status.DataSecretName; the infrastructure provider (CAPD in this example) will then pick up this value and proceed with the machine creation and the actual bootstrap.

KubeadmConfig objects

The KubeadmConfig object allows full control of kubeadm init/join operations by exposing raw InitConfiguration, ClusterConfiguration and JoinConfiguration objects.

If some values are left empty, CABPK fills them in with sensible defaults:

| KubeadmConfig field | Default |
|---|---|
| clusterConfiguration.KubernetesVersion | Machine.Spec.Version[1] |
| clusterConfiguration.clusterName | Cluster.metadata.name |
| clusterConfiguration.controlPlaneEndpoint | Cluster.status.apiEndpoints[0] |
| clusterConfiguration.networking.dnsDomain | Cluster.spec.clusterNetwork.serviceDomain |
| clusterConfiguration.networking.serviceSubnet | Cluster.spec.clusterNetwork.services.cidrBlocks[0] |
| clusterConfiguration.networking.podSubnet | Cluster.spec.clusterNetwork.pods.cidrBlocks[0] |
| joinConfiguration.discovery | a short-lived BootstrapToken generated by CABPK |

IMPORTANT! Overriding the above defaults could lead to broken Clusters.

[1] If both clusterConfiguration.KubernetesVersion and Machine.Spec.Version are empty, the latest Kubernetes version will be installed (as defined by the default kubeadm behavior).
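The defaulting rules in the table can be sketched as a Python function over plain dictionaries. This is a minimal sketch, not CABPK's actual Go code: `apply_cabpk_defaults` is a hypothetical helper, the dictionaries loosely mirror the table's paths (using the serialized field name `kubernetesVersion`, and `services` for the service CIDR blocks as in the Cluster API `ClusterNetwork` type), and the control plane endpoint is simplified to a string. As in footnote [1], an empty version is left empty so that kubeadm's own default applies.

```python
import copy


def _first(items):
    """Return the first element of a list, or None if it is empty or missing."""
    return items[0] if items else None


def apply_cabpk_defaults(cluster_config, cluster, machine):
    """Return a copy of a clusterConfiguration dict with empty fields defaulted.

    User-provided values are never overwritten.
    """
    cfg = copy.deepcopy(cluster_config)
    networking = cfg.setdefault("networking", {})
    cluster_network = cluster.get("spec", {}).get("clusterNetwork", {})

    defaults = [
        (cfg, "kubernetesVersion", machine.get("spec", {}).get("version")),
        (cfg, "clusterName", cluster.get("metadata", {}).get("name")),
        (cfg, "controlPlaneEndpoint",
         _first(cluster.get("status", {}).get("apiEndpoints"))),
        (networking, "dnsDomain", cluster_network.get("serviceDomain")),
        (networking, "serviceSubnet",
         _first(cluster_network.get("services", {}).get("cidrBlocks"))),
        (networking, "podSubnet",
         _first(cluster_network.get("pods", {}).get("cidrBlocks"))),
    ]
    for target, key, value in defaults:
        # Fill only fields the user left empty, and only if a default exists.
        if not target.get(key) and value:
            target[key] = value
    return cfg
```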

Examples

Valid combinations of configuration objects are:

- for KCP, InitConfiguration and ClusterConfiguration for the first control plane node, and JoinConfiguration for additional control plane nodes
- for machine deployments, JoinConfiguration for worker nodes

Bootstrap control plane node:

kind: KubeadmConfig
apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-control-plane1-config
spec:
  initConfiguration:
    nodeRegistration:
      kubeletExtraArgs:
        eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%
  clusterConfiguration:
    controllerManager:
      extraArgs:
        enable-hostpath-provisioner: "true"

Additional control plane nodes:

kind: KubeadmConfig
apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-control-plane2-config
spec:
  joinConfiguration:
    nodeRegistration:
      kubeletExtraArgs:
        eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%
    controlPlane: {}

Worker nodes:

kind: KubeadmConfig
apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
metadata:
  name: my-worker1-config
spec:
  joinConfiguration:
    nodeRegistration:
      kubeletExtraArgs:
        eviction-hard: nodefs.available<0%,nodefs.inodesFree<0%,imagefs.available<0%

Bootstrap Orchestration

CABPK supports multiple control plane machines initializing at the same time. The generation of cloud-init scripts for different machines is orchestrated to ensure a cluster bootstrap process that complies with the correct kubeadm init/join sequence. In more detail:

- cloud-config-data generation starts only after the Cluster.Status.InfrastructureReady flag is set to true.
- At this stage, cloud-config-data will be generated for the first control plane machine only, keeping any additional control plane machines in the cluster on hold (kubeadm init).
- After the ControlPlaneInitialized condition on the Cluster object is set to true, the cloud-config-data for all the other machines is generated (kubeadm join / kubeadm join --control-plane).
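The ordering rules above can be sketched as a small Python planning function. This is a minimal sketch under stated assumptions: `PendingMachine` and `bootstrap_plan` are hypothetical names, `pending` is assumed to hold only machines that do not yet have cloud-config-data, and choosing the init machine by list order is a simplification of how CABPK actually picks a single machine.

```python
from dataclasses import dataclass


@dataclass
class PendingMachine:
    """A machine without cloud-config-data yet (hypothetical model)."""
    name: str
    control_plane: bool


def bootstrap_plan(infrastructure_ready, control_plane_initialized, pending):
    """Return (machine name, kubeadm command) pairs that may be generated now."""
    if not infrastructure_ready:
        # Nothing is generated until Cluster.Status.InfrastructureReady is true.
        return []
    if not control_plane_initialized:
        # Only one control plane machine runs kubeadm init; all others wait.
        # Picking the first one in list order is a simplification.
        for machine in pending:
            if machine.control_plane:
                return [(machine.name, "kubeadm init")]
        return []
    # Once ControlPlaneInitialized is true, every remaining machine joins.
    return [
        (m.name, "kubeadm join --control-plane" if m.control_plane else "kubeadm join")
        for m in pending
    ]
```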

Certificate Management

The user can choose between two approaches for certificate management:

- provide the required certificate authorities (CAs) to use for kubeadm init/kubeadm join --control-plane; such CAs should be provided as Secrets objects in the management cluster.
- let KCP generate the necessary Secrets objects with a self-signed certificate authority for kubeadm.

See here for more info about certificate management with kubeadm.

Additional Features

The KubeadmConfig object supports customizing the content of the config-data. The following examples illustrate how to specify these options. They should be adapted to fit your environment and use case.

- KubeadmConfig.Files specifies additional files to be created on the machine, either with content inline or by referencing a secret.

    files:
    - contentFrom:
        secret:
          key: node-cloud.json
          name: ${CLUSTER_NAME}-md-0-cloud-json
      owner: root:root
      path: /etc/kubernetes/cloud.json
      permissions: "0644"
    - path: /etc/kubernetes/cloud.json
      owner: "root:root"
      permissions: "0644"
      content: |
        {
          "cloud": "CustomCloud"
        }

- KubeadmConfig.PreKubeadmCommands specifies a list of commands to be executed before kubeadm init/join.

    preKubeadmCommands:
      - hostname "{{ ds.meta_data.hostname }}"
      - echo "{{ ds.meta_data.hostname }}" >/etc/hostname

- KubeadmConfig.PostKubeadmCommands is the same as above, but runs after kubeadm init/join.

    postKubeadmCommands:
      - echo "success" >/var/log/my-custom-file.log

- KubeadmConfig.Users specifies a list of users to be created on the machine.

    users:
      - name: capiuser
        sshAuthorizedKeys:
        - '${SSH_AUTHORIZED_KEY}'
        sudo: ALL=(ALL) NOPASSWD:ALL

- KubeadmConfig.NTP specifies NTP settings for the machine.

    ntp:
      servers:
        - IP_ADDRESS
      enabled: true

- KubeadmConfig.DiskSetup specifies options for the creation of partition tables and file systems on devices.

    diskSetup:
      filesystems:
        - device: /dev/disk/azure/scsi1/lun0
          extraOpts:
            - -E
            - lazy_itable_init=1,lazy_journal_init=1
          filesystem: ext4
          label: etcd_disk
        - device: ephemeral0.1
          filesystem: ext4
          label: ephemeral0
          replaceFS: ntfs
      partitions:
        - device: /dev/disk/azure/scsi1/lun0
          layout: true
          overwrite: false
          tableType: gpt

- KubeadmConfig.Mounts specifies a list of mount points to be set up.

    mounts:
      - - LABEL=etcd_disk
        - /var/lib/etcddisk

- KubeadmConfig.Verbosity specifies the kubeadm log level verbosity.

    verbosity: 10

- KubeadmConfig.UseExperimentalRetryJoin replaces a basic kubeadm command with a shell script with retries for joins. This will add about 40KB to userdata.

    useExperimentalRetryJoin: true

For more information on cloud-init options, see cloud config examples.

Upgrading management and workload clusters

Considerations

Supported versions of Kubernetes

If you are upgrading the version of Kubernetes for a cluster managed by Cluster API, check that the running version of Cluster API on the Management Cluster supports the target Kubernetes version.

You may need to upgrade the version of Cluster API in order to support the target Kubernetes version.

In addition, you must always upgrade between Kubernetes minor versions in sequence, e.g. if you need to upgrade from Kubernetes v1.17 to v1.19, you must first upgrade to v1.18.
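The one-minor-version-at-a-time rule can be illustrated with a short Python helper that lists the intermediate versions to pass through. This is a minimal sketch: `minor_upgrade_path` is a hypothetical name, it only handles forward upgrades within one major version, and it says nothing about which versions a given Cluster API release supports.

```python
import re

# Accepts "v1.18", "1.18" or "v1.18.2"; the patch component is ignored.
_VERSION = re.compile(r"v?(\d+)\.(\d+)(?:\.\d+)?")


def minor_upgrade_path(current, target):
    """List the minor versions to step through, one minor version at a time."""
    cur = _VERSION.fullmatch(current)
    tgt = _VERSION.fullmatch(target)
    if not cur or not tgt:
        raise ValueError(f"unrecognized version: {current!r} or {target!r}")
    cur_major, cur_minor = int(cur.group(1)), int(cur.group(2))
    tgt_major, tgt_minor = int(tgt.group(1)), int(tgt.group(2))
    if cur_major != tgt_major or tgt_minor < cur_minor:
        raise ValueError("only forward upgrades within one major version are handled")
    return [f"v{cur_major}.{minor}" for minor in range(cur_minor + 1, tgt_minor + 1)]


if __name__ == "__main__":
    print(minor_upgrade_path("v1.17", "v1.19"))  # ['v1.18', 'v1.19']
```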

Images

For kubeadm-based clusters, infrastructure providers require a “machine image” containing pre-installed, matching versions of kubeadm and kubelet; ensure that relevant infrastructure machine templates reference the appropriate image for the Kubernetes version.

Upgrading using Cluster API

The high-level steps to fully upgrade a cluster are to first upgrade the control plane and then upgrade the worker machines.

Upgrading the control plane machines

How to upgrade the underlying machine image

To upgrade the underlying machine images of the control plane machines, the MachineTemplate resource referenced by the KubeadmControlPlane must be changed. Since MachineTemplate resources are immutable, the recommended approach is to:

1. Copy the existing MachineTemplate.
2. Modify the values that need changing, such as instance type or image ID.
3. Create the new MachineTemplate on the management cluster.
4. Modify the existing KubeadmControlPlane resource to reference the new MachineTemplate resource in the infrastructureRef field.

The final step will trigger a rolling update of the control plane using the new values found in the new MachineTemplate.

How to upgrade the Kubernetes control plane version

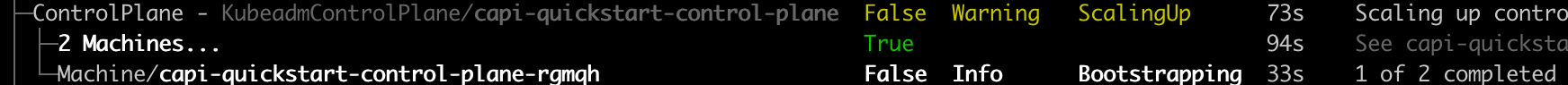

To upgrade the Kubernetes control plane version, modify the KubeadmControlPlane resource’s Spec.Version field. This will trigger a rolling upgrade of the control plane and, depending on the provider, also upgrade the underlying machine image.

Some infrastructure providers, such as AWS, require that if a specific machine image is specified, it must match the Kubernetes version specified in the KubeadmControlPlane spec. To trigger only a single upgrade, create the new MachineTemplate first and then modify both the Version and InfrastructureTemplate in a single transaction.

How to schedule a machine rollout

A KubeadmControlPlane resource has a field RolloutAfter that can be set to a timestamp (RFC 3339) after which a rollout should be triggered regardless of whether there were any changes to the KubeadmControlPlane.Spec. This rolls out replacement control plane nodes, which can be useful, e.g., to perform certificate rotation, reflect changes to machine templates, or move to new machines.

Note that this field can only be used for triggering a rollout, not for delaying one. Specifically, a rollout can also happen before the time specified in RolloutAfter if any changes are made to the spec before that time.
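The trigger-only semantics of RolloutAfter can be sketched in Python. This is a minimal sketch under an assumption not stated in this document: a Machine is treated as due for replacement once the current time is past RolloutAfter and the Machine was created before that timestamp, so replacements created afterwards are not rolled again. `parse_rfc3339` and `needs_rollout` are hypothetical helpers, not KCP's actual code.

```python
from datetime import datetime, timezone


def parse_rfc3339(value):
    """Parse an RFC 3339 timestamp such as "2021-06-01T00:00:00Z"."""
    # datetime.fromisoformat only accepts a trailing "Z" from Python 3.11 on,
    # so rewrite it as an explicit UTC offset first.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def needs_rollout(rollout_after, machine_created, now):
    """Assumed rule: replace a machine once `now` is past RolloutAfter,
    but only if the machine was created before that timestamp."""
    deadline = parse_rfc3339(rollout_after)
    return now > deadline and parse_rfc3339(machine_created) < deadline
```

Because the check only ever forces replacement after the timestamp, it cannot hold back a rollout caused by a spec change, which matches the note above.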

To do the same for machines managed by a MachineDeployment, it’s enough to make an arbitrary change to its Spec.Template; one common approach is to run:

clusterctl alpha rollout restart machinedeployment/my-md-0

This will modify the template by setting a cluster.x-k8s.io/restartedAt annotation, which will trigger a rollout.

Upgrading machines managed by a MachineDeployment

Upgrades are not limited to just the control plane. This section is not related to Kubeadm control plane specifically, but is the final step in fully upgrading a Cluster API managed cluster.

It is recommended to manage machines with one or more MachineDeployments. MachineDeployments will transparently manage MachineSets and Machines to allow for a seamless scaling experience. A modification to the MachineDeployment’s spec will begin a rolling update of the machines. Follow these instructions for changing the template for an existing MachineDeployment.

MachineDeployments support different strategies for rolling out changes to Machines:

- RollingUpdate

  Changes are rolled out by honouring MaxUnavailable and MaxSurge values. The only allowed values are integers, or strings containing an integer followed by a percent sign, e.g. “5%”.

- OnDelete

  Changes are rolled out as the user or any other entity deletes the old Machines. Only when a Machine is fully deleted will a new one come up.
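The int-or-percentage values accepted by RollingUpdate can be resolved into Machine counts as sketched below. This is a minimal sketch that assumes MachineDeployments follow the Kubernetes Deployment conventions: a percentage MaxSurge is rounded up, a percentage MaxUnavailable is rounded down, and if both resolve to zero, one Machine is allowed to be unavailable so the rollout can progress. `scaled_value` and `resolve_fenceposts` are hypothetical names.

```python
def scaled_value(value, replicas, round_up):
    """Resolve an int-or-percentage value such as 2 or "25%" against replicas."""
    if isinstance(value, int):
        return value
    if isinstance(value, str) and value.endswith("%") and value[:-1].isdigit():
        scaled = int(value[:-1]) * replicas
        # Integer ceiling/floor division avoids floating point rounding issues.
        return -(-scaled // 100) if round_up else scaled // 100
    raise ValueError(f"invalid int-or-percent value: {value!r}")


def resolve_fenceposts(max_surge, max_unavailable, replicas):
    """Return (surge, unavailable) Machine counts for a RollingUpdate."""
    surge = scaled_value(max_surge, replicas, round_up=True)
    unavailable = scaled_value(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # A rollout with neither surge nor unavailability could never progress.
        unavailable = 1
    return surge, unavailable
```

For example, with 10 replicas and both values set to "25%", this sketch allows 3 extra Machines and 2 unavailable ones.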

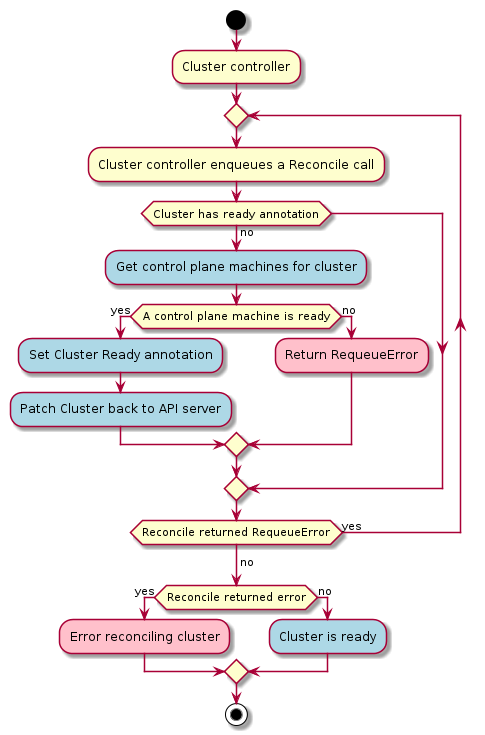

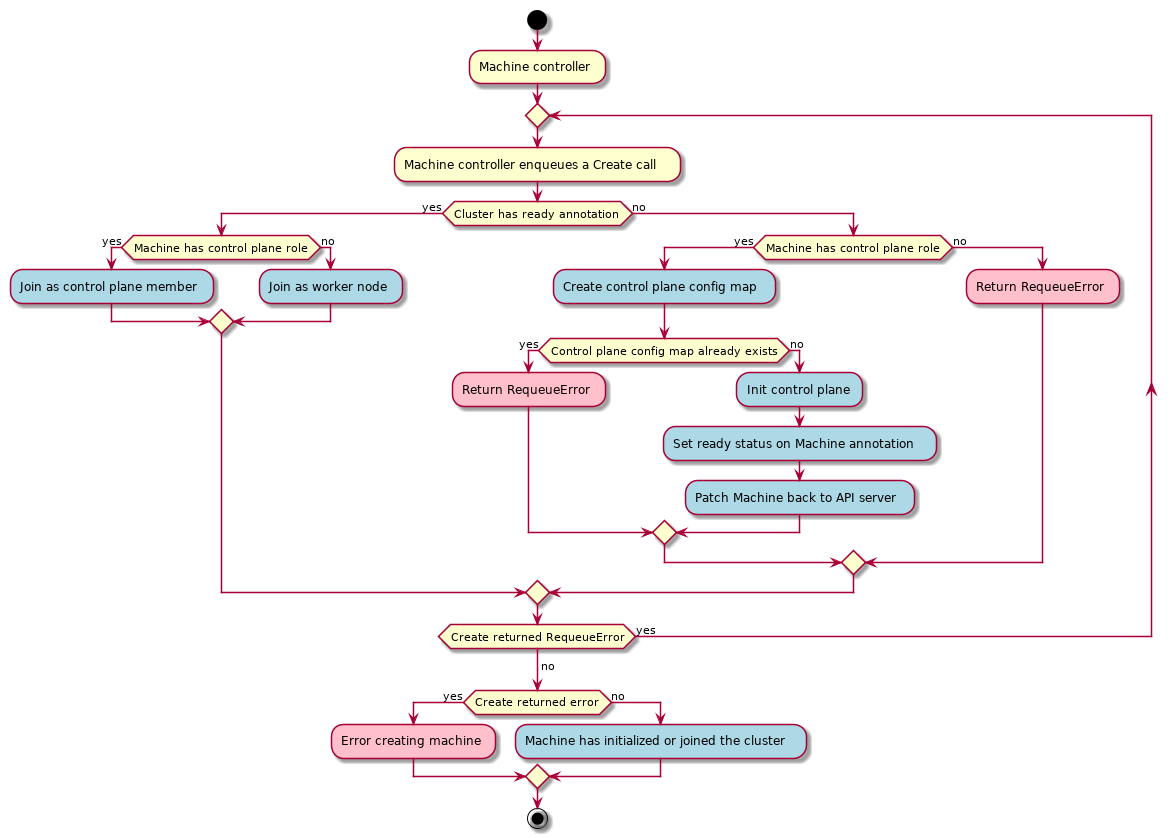

For a more in-depth look at how MachineDeployments manage scaling events, take a look at the MachineDeployment controller documentation and the MachineSet controller documentation.

Upgrading Cluster API components

When to upgrade

In general, it’s recommended to upgrade to the latest version of Cluster API to take advantage of bug fixes, new features and improvements.

Considerations

If moving between different API versions, there may be additional tasks that you need to complete. See below for instructions moving between v1alpha3 and v1alpha4.

Ensure that the version of Cluster API is compatible with the Kubernetes version of the management cluster.

Upgrading to newer versions of 0.4.x

Use clusterctl to upgrade between versions of Cluster API 0.4.x.

Upgrading from Cluster API v1alpha3 (0.3.x) to Cluster API v1alpha4 (0.4.x)

For detailed information about the changes from v1alpha3 to v1alpha4, please refer to the Cluster API v1alpha3 compared to v1alpha4 section.

Use clusterctl to upgrade from Cluster API v0.3.x to Cluster API 0.4.x.

You should now be able to manage your resources using the v1alpha4 version of the Cluster API components.

Configure a MachineHealthCheck

Prerequisites

Before attempting to configure a MachineHealthCheck, you should have a working management cluster with at least one MachineDeployment or MachineSet deployed.

What is a MachineHealthCheck?

A MachineHealthCheck is a resource within the Cluster API which allows users to define conditions under which Machines within a Cluster should be considered unhealthy. A MachineHealthCheck is defined on a management cluster and scoped to a particular workload cluster.

When defining a MachineHealthCheck, users specify a timeout for each of the conditions that they define to check on the Machine’s Node. If any of these conditions are met for the duration of the timeout, the Machine will be remediated. By default, the action of remediating a Machine should trigger a new Machine to be created to replace the failed one, but providers are allowed to plug in more sophisticated external remediation solutions.
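The per-condition timeout check can be sketched in Python. This is a minimal sketch, not the MachineHealthCheck controller's code: `NodeCondition`, `UnhealthyCondition` and `is_unhealthy` are hypothetical, and the sketch assumes a condition counts as matched once the time since its last transition strictly exceeds the configured timeout.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class NodeCondition:
    type: str                   # e.g. "Ready"
    status: str                 # "True", "False" or "Unknown"
    last_transition: datetime   # when the condition last changed status


@dataclass
class UnhealthyCondition:
    type: str
    status: str
    timeout: timedelta


def is_unhealthy(node_conditions, checks, now):
    """True if any node condition has matched a check for longer than its timeout."""
    for check in checks:
        for cond in node_conditions:
            if (cond.type == check.type and cond.status == check.status
                    and now - cond.last_transition > check.timeout):
                return True
    return False
```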

Creating a MachineHealthCheck

Use the following example as a basis for creating a MachineHealthCheck for worker nodes:

apiVersion: cluster.x-k8s.io/v1alpha3
kind: MachineHealthCheck
metadata:
  name: capi-quickstart-node-unhealthy-5m
spec:
  # clusterName is required to associate this MachineHealthCheck with a particular cluster
  clusterName: capi-quickstart
  # (Optional) maxUnhealthy prevents further remediation if the cluster is already partially unhealthy
  maxUnhealthy: 40%
  # (Optional) nodeStartupTimeout determines how long a MachineHealthCheck should wait for
  # a Node to join the cluster, before considering a Machine unhealthy.
  # Defaults to 10 minutes if not specified.
  # Set to 0 to disable the node startup timeout.
  # Disabling this timeout will prevent a Machine from being considered unhealthy when
  # the Node it created has not yet registered with the cluster. This can be useful when
  # Nodes take a long time to start up or when you only want condition based checks for
  # Machine health.
  nodeStartupTimeout: 10m
  # selector is used to determine which Machines should be health checked
  selector:
    matchLabels:
      nodepool: nodepool-0
  # Conditions to check on Nodes for matched Machines; if any condition is matched for the duration of its timeout, the Machine is considered unhealthy
  unhealthyConditions:
  - type: Ready
    status: Unknown
    timeout: 300s
  - type: Ready
    status: "False"
    timeout: 300s

Use this example as the basis for defining a MachineHealthCheck for control plane nodes managed via the KubeadmControlPlane:

apiVersion: cluster.x-k8s.io/v1alpha3
kind: MachineHealthCheck
metadata:
  name: capi-quickstart-kcp-unhealthy-5m
spec:
  clusterName: capi-quickstart
  maxUnhealthy: 100%
  selector:
    matchLabels:
      cluster.x-k8s.io/control-plane: ""
  unhealthyConditions:
  - type: Ready
    status: Unknown
    timeout: 300s
  - type: Ready
    status: "False"
    timeout: 300s

Remediation Short-Circuiting

To ensure that MachineHealthChecks only remediate Machines when the cluster is healthy, short-circuiting is implemented to prevent further remediation via the maxUnhealthy and unhealthyRange fields within the MachineHealthCheck spec.

Max Unhealthy

If the user defines a value for the maxUnhealthy field (either an absolute number or a percentage of the total Machines checked by this MachineHealthCheck), then before remediating any Machines, the MachineHealthCheck will compare the value of maxUnhealthy with the number of Machines it has determined to be unhealthy.

If the number of unhealthy Machines exceeds the limit set by maxUnhealthy, remediation will not be performed.

With an Absolute Value

If maxUnhealthy is set to 2:

- If 2 or fewer nodes are unhealthy, remediation will be performed

- If 3 or more nodes are unhealthy, remediation will not be performed

These values are independent of how many Machines are being checked by the MachineHealthCheck.

With Percentages

If maxUnhealthy is set to 40% and there are 25 Machines being checked:

- If 10 or fewer nodes are unhealthy, remediation will be performed

- If 11 or more nodes are unhealthy, remediation will not be performed

If maxUnhealthy is set to 40% and there are 6 Machines being checked:

- If 2 or fewer nodes are unhealthy, remediation will be performed

- If 3 or more nodes are unhealthy, remediation will not be performed

Note, when the percentage is not a whole number, the allowed number is rounded down.
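The maxUnhealthy check, including the rounding-down rule, can be sketched in Python and checked against the examples above. This is a minimal sketch; `max_unhealthy_allows_remediation` is a hypothetical helper rather than the controller's implementation.

```python
def max_unhealthy_allows_remediation(max_unhealthy, total, unhealthy):
    """Return True if remediation may proceed under a maxUnhealthy limit.

    max_unhealthy is an absolute number (e.g. 2) or a percentage string
    (e.g. "40%") of the `total` Machines checked by the MachineHealthCheck.
    """
    if isinstance(max_unhealthy, str) and max_unhealthy.endswith("%"):
        # Percentages are rounded down via integer division.
        allowed = int(max_unhealthy[:-1]) * total // 100
    else:
        allowed = int(max_unhealthy)
    return unhealthy <= allowed
```

With "40%" and 6 Machines, 40% of 6 is 2.4, which rounds down to 2 allowed unhealthy Machines.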

Unhealthy Range

If the user defines a value for the unhealthyRange field (bracketed values that specify a start and an end value), then before remediating any Machines, the MachineHealthCheck will check whether the number of Machines it has determined to be unhealthy is within the range specified by unhealthyRange.

If it is not within the range set by unhealthyRange, remediation will not be performed.

With a range of values

If unhealthyRange is set to [3-5] and there are 10 Machines being checked:

- If 2 or fewer nodes are unhealthy, remediation will not be performed.

- If 6 or more nodes are unhealthy, remediation will not be performed.

- In all other cases, remediation will be performed.

Note that the above example uses 10 Machines as a sample set, but this works the same way for any other number. This is useful for dynamically scaling clusters where the number of Machines changes frequently.
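The unhealthyRange check can be sketched in Python as follows. This is a minimal sketch, assuming both ends of the bracketed range are inclusive (so with "[3-5]", 3, 4 or 5 unhealthy Machines allow remediation); `unhealthy_range_allows_remediation` is a hypothetical helper, and the total number of Machines plays no role, as the note above says.

```python
import re

# Matches values such as "[3-5]".
_RANGE = re.compile(r"\[(\d+)-(\d+)\]")


def unhealthy_range_allows_remediation(unhealthy_range, unhealthy):
    """Return True if the unhealthy count lies inside the (inclusive) range."""
    match = _RANGE.fullmatch(unhealthy_range)
    if not match:
        raise ValueError(f"invalid unhealthyRange: {unhealthy_range!r}")
    low, high = int(match.group(1)), int(match.group(2))
    if low > high:
        raise ValueError(f"range start exceeds end: {unhealthy_range!r}")
    return low <= unhealthy <= high
```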

Skipping Remediation

There are scenarios where remediation of a machine may be undesirable (e.g. during cluster migration using clusterctl move). For such cases, MachineHealthCheck provides two mechanisms to skip machines for remediation.

Implicit skipping when the resource is paused (using cluster.x-k8s.io/paused annotation):

- When a cluster is paused, none of the machines in that cluster are considered for remediation.

- When a machine is paused, only that machine is not considered for remediation.

- A cluster or a machine is usually paused automatically by Cluster API when it detects a migration.

Explicit skipping using cluster.x-k8s.io/skip-remediation annotation:

- Users can also skip any machine for remediation by setting the cluster.x-k8s.io/skip-remediation annotation on that machine.
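Both skipping mechanisms can be combined into a single predicate, sketched below in Python. This is a minimal sketch, assuming only the presence of each annotation key matters (not its value) and that annotations are given as plain dictionaries; `skip_remediation` is a hypothetical helper.

```python
PAUSED_ANNOTATION = "cluster.x-k8s.io/paused"
SKIP_REMEDIATION_ANNOTATION = "cluster.x-k8s.io/skip-remediation"


def skip_remediation(cluster_annotations, machine_annotations):
    """True if a Machine must not be remediated."""
    if PAUSED_ANNOTATION in cluster_annotations:
        return True  # paused cluster: none of its machines are remediated
    if PAUSED_ANNOTATION in machine_annotations:
        return True  # paused machine: only this machine is skipped
    # Explicit opt-out for a single machine.
    return SKIP_REMEDIATION_ANNOTATION in machine_annotations
```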

Limitations and Caveats of a MachineHealthCheck

Before deploying a MachineHealthCheck, please familiarise yourself with the following limitations and caveats:

- Only Machines owned by a MachineSet or a KubeadmControlPlane can be remediated by a MachineHealthCheck (since a MachineDeployment uses a MachineSet, this includes Machines that are part of a MachineDeployment)

- Machines managed by a KubeadmControlPlane are remediated according to the delete-and-recreate guidelines described in the KubeadmControlPlane proposal

- If the Node for a Machine is removed from the cluster, a MachineHealthCheck will consider this Machine unhealthy and remediate it immediately

- If no Node joins the cluster for a Machine after the NodeStartupTimeout, the Machine will be remediated

- If a Machine fails for any reason (if the FailureReason is set), the Machine will be remediated immediately

Kubeadm control plane

Using the Kubeadm control plane type to manage a control plane provides several ways to upgrade control plane machines.

Kubeconfig management

KCP will generate and manage the admin Kubeconfig for clusters. The client certificate for the admin user is created with a validity period of one year, and will be automatically regenerated when the cluster is reconciled and has less than 6 months of validity remaining.
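The regeneration rule can be sketched in Python. This is a minimal sketch under stated assumptions: "a year" is approximated as 365 days and "6 months" as 182 days, and `not_after_for` and `needs_regeneration` are hypothetical helpers; how KCP computes the exact threshold is not described here.

```python
from datetime import datetime, timedelta, timezone

# Assumed approximations of "a year" of validity and a "6 months" threshold.
CERT_VALIDITY = timedelta(days=365)
REGENERATE_BELOW = timedelta(days=182)


def not_after_for(issued_at):
    """Expiry of an admin client certificate issued at issued_at."""
    return issued_at + CERT_VALIDITY


def needs_regeneration(not_after, now):
    """True if less than the threshold of validity remains at reconcile time."""
    return not_after - now < REGENERATE_BELOW
```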

Upgrades

See the section on upgrading clusters.

Using Kubeadm Control Plane when upgrading from Cluster API v1alpha2 (0.2.x)

See the section on Adopting existing machines into KubeadmControlPlane management

Running workloads on control plane machines

We don’t recommend running workloads on control plane machines and strongly encourage avoiding it unless absolutely necessary.

However, if the user wants to run non-control plane workloads on control plane machines, they are ultimately responsible for ensuring the proper functioning of those workloads, given that KCP is not aware of the specific requirements for each type of workload (e.g. preserving quorum, shutdown procedures etc.).

In order to do so, the user can rely on the same assumptions that apply to all Cluster API Machines:

- The Kubernetes node hosted on the Machine will be cordoned & drained before removal (with well known exceptions like full Cluster deletion).

- The Machine will respect PreDrainDeleteHook and PreTerminateDeleteHook. See the Machine Deletion Phase Hooks proposal for additional details.

Updating Machine Infrastructure and Bootstrap Templates

Updating Infrastructure Machine Templates

Several different components of Cluster API leverage infrastructure machine templates, including KubeadmControlPlane, MachineDeployment, and MachineSet. These MachineTemplate resources should be immutable, unless the infrastructure provider documentation indicates otherwise for certain fields (see below for more details).

The correct process for modifying an infrastructure machine template is as follows:

1. Duplicate an existing template. Users can use kubectl get <MachineTemplateType> <name> -o yaml > file.yaml to retrieve a template configuration from a running cluster to serve as a starting point.
2. Update the desired fields. Fields that might need to be modified could include the SSH key, the AWS instance type, or the Azure VM size. Refer to the provider-specific documentation for more details on the specific fields that each provider requires or accepts.
3. Give the newly-modified template a new name by modifying the metadata.name field (or by using metadata.generateName).
4. Create the new infrastructure machine template on the API server using kubectl. (If the template was initially created using the command in step 1, be sure to clear out any extraneous metadata, including the resourceVersion field, before trying to send it to the API server.)
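The duplicate, rename and clean-up steps can be sketched in Python on a manifest already loaded into a dictionary (for example from the kubectl get output). This is a minimal sketch: `duplicate_template` is a hypothetical helper, the list of server-populated metadata fields to drop is an assumption based on standard Kubernetes object metadata, and updating provider-specific fields and creating the object with kubectl are left to you.

```python
import copy

# Metadata fields populated by the API server that should not be sent back
# when creating a new object from a retrieved manifest (assumed list).
SERVER_POPULATED_METADATA = (
    "resourceVersion", "uid", "creationTimestamp", "generation",
    "managedFields", "selfLink",
)


def duplicate_template(template, new_name):
    """Return a renamed copy of a template manifest without server-set metadata."""
    new = copy.deepcopy(template)
    metadata = new.setdefault("metadata", {})
    for field in SERVER_POPULATED_METADATA:
        metadata.pop(field, None)
    new.pop("status", None)  # status is owned by controllers, not the user
    metadata["name"] = new_name
    return new
```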

Once the new infrastructure machine template has been persisted, users may modify the object that was referencing the infrastructure machine template. For example, to modify the infrastructure machine template for the KubeadmControlPlane object, users would modify the spec.infrastructureTemplate.name field. For a MachineDeployment or MachineSet, users would need to modify the spec.template.spec.infrastructureRef.name field. In all cases, the name field should be updated to point to the newly-modified infrastructure machine template. This will trigger a rolling update. (This same process is described in the documentation for upgrading the underlying machine image for KubeadmControlPlane in the “How to upgrade the underlying machine image” section.)
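One way to repoint an object is kubectl patch with a JSON merge patch, which only touches the name field. The sketch below uses the field paths described above; the function, object, and template names are hypothetical, and the KubeadmControlPlane path assumes the spec.infrastructureTemplate field named on this page, so check it against the API version you are running.

```shell
#!/usr/bin/env bash
# Point a MachineDeployment or MachineSet ($1 = kind) at a new infrastructure
# machine template via spec.template.spec.infrastructureRef.name.
# Changing this reference triggers a rolling update.
point_machines_at_infra_template() {
  local kind="$1" name="$2" template="$3"
  kubectl patch "${kind}" "${name}" --type merge \
    -p "{\"spec\":{\"template\":{\"spec\":{\"infrastructureRef\":{\"name\":\"${template}\"}}}}}"
}

# Same idea for KubeadmControlPlane, via spec.infrastructureTemplate.name.
point_kcp_at_infra_template() {
  local name="$1" template="$2"
  kubectl patch kubeadmcontrolplane "${name}" --type merge \
    -p "{\"spec\":{\"infrastructureTemplate\":{\"name\":\"${template}\"}}}"
}

# Usage (hypothetical names):
#   point_machines_at_infra_template machinedeployment md-0 my-template-v2
#   point_kcp_at_infra_template my-control-plane my-cp-template-v2
```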

Some infrastructure providers may, at their discretion, choose to support in-place modifications of certain infrastructure machine template fields. This may be useful if an infrastructure provider is able to make changes to running instances/machines, such as updating allocated memory or CPU capacity. In such cases, however, Cluster API will not trigger a rolling update.

Updating Bootstrap Templates

Several different components of Cluster API leverage bootstrap templates, including MachineDeployment and MachineSet. When used in a MachineDeployment or MachineSet, changes to those templates do not trigger rollouts of already existing Machines. New Machines are created based on the current version of the bootstrap template.

The correct process for modifying a bootstrap template is as follows:

- Duplicate an existing template. Users can use kubectl get <BootstrapTemplateType> <name> -o yaml > file.yaml to retrieve a template configuration from a running cluster to serve as a starting point.

- Update the desired fields.

- Give the newly-modified template a new name by modifying the metadata.name field (or by using metadata.generateName).

- Create the new bootstrap template on the API server using kubectl. (If the template was initially created using the command in the first step, be sure to clear out any extraneous metadata, including the resourceVersion field, before trying to send it to the API server.)

Once the new bootstrap template has been persisted, users may modify the object that was referencing the bootstrap template. For example, to modify the bootstrap template for the MachineDeployment object, users would modify the spec.template.spec.bootstrap.configRef.name field. The name field should be updated to point to the newly-modified bootstrap template. This will trigger a rolling update.
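The same kubectl patch approach works for the bootstrap reference. This is a hypothetical helper, sketched under the assumption that the MachineDeployment lives in the current kubectl namespace and that a JSON merge patch is acceptable for your workflow.

```shell
#!/usr/bin/env bash
# Point a MachineDeployment at a new bootstrap config template by patching
# spec.template.spec.bootstrap.configRef.name; this triggers a rolling update.
point_md_at_bootstrap_template() {
  local name="$1" template="$2"
  kubectl patch machinedeployment "${name}" --type merge \
    -p "{\"spec\":{\"template\":{\"spec\":{\"bootstrap\":{\"configRef\":{\"name\":\"${template}\"}}}}}}"
}

# Usage (hypothetical names):
#   point_md_at_bootstrap_template md-0 my-kubeadm-config-template-v2
```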

Using the Cluster Autoscaler

Cluster Autoscaler is a tool that automatically adjusts the size of the Kubernetes cluster based on the utilization of Pods and Nodes in your cluster. For more general information about the Cluster Autoscaler, please see the project documentation.

The following instructions are a reproduction of the Cluster API provider-specific documentation from the Autoscaler project documentation.

Cluster Autoscaler on Cluster API

The cluster autoscaler on Cluster API uses the cluster-api project to manage the provisioning and de-provisioning of nodes within a Kubernetes cluster.

Kubernetes Version

The cluster-api provider requires Kubernetes v1.16 or greater to run the v1alpha3 version of the API.

Starting the Autoscaler

To enable the Cluster API provider, you must first specify it in the command line arguments to the cluster autoscaler binary. For example:

cluster-autoscaler --cloud-provider=clusterapi

Please note that this example only shows the cloud provider options; you will most likely need other command line flags. For more information, you can invoke cluster-autoscaler --help to see a full list of options.

Configuring node group auto discovery

If you do not configure node group auto discovery, cluster autoscaler will attempt to match nodes against any scalable resources found in any namespace and belonging to any Cluster.

Limiting cluster autoscaler to only match against resources in the blue namespace

--node-group-auto-discovery=clusterapi:namespace=blue

Limiting cluster autoscaler to only match against resources belonging to Cluster test1

--node-group-auto-discovery=clusterapi:clusterName=test1

Limiting cluster autoscaler to only match against resources matching the provided labels

--node-group-auto-discovery=clusterapi:color=green,shape=square

These can be mixed and matched in any combination, for example to only match resources in the staging namespace, belonging to the purple cluster, with the label owner=jim:

--node-group-auto-discovery=clusterapi:namespace=staging,clusterName=purple,owner=jim

Connecting cluster-autoscaler to Cluster API management and workload Clusters

You will also need to provide the path to the kubeconfig(s) for the management and workload cluster you wish cluster-autoscaler to run against. To specify the kubeconfig path for the workload cluster to monitor, use the --kubeconfig option and supply the path to the kubeconfig. If the --kubeconfig option is not specified, cluster-autoscaler will attempt to use an in-cluster configuration.

To specify the kubeconfig path for the management cluster to monitor, use the --cloud-config option and supply the path to the kubeconfig. If the --cloud-config option is not specified, it will fall back to using the kubeconfig that was provided with the --kubeconfig option.

Autoscaler running in a joined cluster using service account credentials

+-----------------+
| mgmt / workload |
| --------------- |
|    autoscaler   |
+-----------------+

Use in-cluster config for both management and workload cluster:

cluster-autoscaler --cloud-provider=clusterapi

Autoscaler running in workload cluster using service account credentials, with separate management cluster

+--------+              +------------+
|  mgmt  |              |  workload  |
|        | cloud-config | ---------- |
|        |<-------------+ autoscaler |
+--------+              +------------+

Use in-cluster config for workload cluster, specify kubeconfig for management cluster:

cluster-autoscaler --cloud-provider=clusterapi \
  --cloud-config=/mnt/kubeconfig

Autoscaler running in management cluster using service account credentials, with separate workload cluster

+------------+             +----------+
|    mgmt    |             | workload |
| ---------- | kubeconfig  |          |
| autoscaler +------------>|          |
+------------+             +----------+

Use in-cluster config for management cluster, specify kubeconfig for workload cluster:

cluster-autoscaler --cloud-provider=clusterapi \
  --kubeconfig=/mnt/kubeconfig \
  --clusterapi-cloud-config-authoritative

Autoscaler running anywhere, with separate kubeconfigs for management and workload clusters

+--------+               +------------+             +----------+
|  mgmt  |               |     ?      |             | workload |
|        | cloud-config  | ---------- | kubeconfig  |          |
|        |<--------------+ autoscaler +------------>|          |
+--------+               +------------+             +----------+

Use separate kubeconfigs for both management and workload cluster:

cluster-autoscaler --cloud-provider=clusterapi \
  --kubeconfig=/mnt/workload.kubeconfig \
  --cloud-config=/mnt/management.kubeconfig

Autoscaler running anywhere, with a common kubeconfig for management and workload clusters

+---------------+             +------------+
| mgmt/workload |             |     ?      |
|               | kubeconfig  | ---------- |
|               |<------------+ autoscaler |
+---------------+             +------------+

Use a single provided kubeconfig for both management and workload cluster:

cluster-autoscaler --cloud-provider=clusterapi \
  --kubeconfig=/mnt/workload.kubeconfig

Enabling Autoscaling

To enable the automatic scaling of components in your cluster-api managed cloud, there are a few annotations you need to provide. These annotations must be applied to either MachineSet or MachineDeployment resources, depending on the type of cluster-api mechanism that you are using.

There are two annotations that control how a cluster resource should be scaled:

- cluster.x-k8s.io/cluster-api-autoscaler-node-group-min-size: This specifies the minimum number of nodes for the associated resource group. The autoscaler will not scale the group below this number. Please note that currently the cluster-api provider will not scale down to zero nodes.

- cluster.x-k8s.io/cluster-api-autoscaler-node-group-max-size: This specifies the maximum number of nodes for the associated resource group. The autoscaler will not scale the group above this number.

The autoscaler will monitor any MachineSet or MachineDeployment containing both of these annotations.
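For example, the two annotations could be added to an existing MachineDeployment with kubectl annotate. This sketch uses a hypothetical helper name and MachineDeployment name, adds a simple check that the minimum does not exceed the maximum, and passes --overwrite so that it can also adjust existing bounds.

```shell
#!/usr/bin/env bash
# Opt a MachineDeployment into autoscaling by setting both size annotations.
# --overwrite allows the same helper to change bounds that are already set.
enable_autoscaling() {
  local name="$1" min="$2" max="$3"
  if [ "${min}" -gt "${max}" ]; then
    echo "min (${min}) must not exceed max (${max})" >&2
    return 1
  fi
  kubectl annotate machinedeployment "${name}" --overwrite \
    "cluster.x-k8s.io/cluster-api-autoscaler-node-group-min-size=${min}" \
    "cluster.x-k8s.io/cluster-api-autoscaler-node-group-max-size=${max}"
}

# Usage (hypothetical name): enable_autoscaling md-0 1 5
```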

Specifying a Custom Resource Group

By default, all Kubernetes resources consumed by the Cluster API provider will use the group cluster.x-k8s.io, with a dynamically acquired version. In some situations, such as testing or prototyping, you may wish to change this group variable. For these situations, you may use the environment variable CAPI_GROUP to change the group that the provider will use.

Please note that setting the CAPI_GROUP environment variable will also cause the annotations for minimum and maximum size to change. This behavior will also affect the machine annotation on nodes, the machine deletion annotation, and the cluster name label. For example, if CAPI_GROUP=test.k8s.io, then the minimum size annotation key will be test.k8s.io/cluster-api-autoscaler-node-group-min-size, the machine annotation on nodes will be test.k8s.io/machine, the machine deletion annotation will be test.k8s.io/delete-machine, and the cluster name label will be test.k8s.io/cluster-name.
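The naming scheme above can be restated as a small bash helper, for instance to check that the annotations on your MachineDeployments match a custom CAPI_GROUP. It only prints key names derived from the rule described here and does not query the autoscaler; the function name is hypothetical, and the max-size key is assumed to follow the same pattern as the min-size key.

```shell
#!/usr/bin/env bash
# Print the annotation and label keys derived from CAPI_GROUP,
# falling back to the default group cluster.x-k8s.io when it is unset.
print_capi_keys() {
  local group="${CAPI_GROUP:-cluster.x-k8s.io}"
  printf '%s\n' \
    "${group}/cluster-api-autoscaler-node-group-min-size" \
    "${group}/cluster-api-autoscaler-node-group-max-size" \
    "${group}/machine" \
    "${group}/delete-machine" \
    "${group}/cluster-name"
}

# Usage: CAPI_GROUP=test.k8s.io print_capi_keys
```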

Specifying a Custom Resource Version

When determining the group version for the Cluster API types, by default the autoscaler will look for the latest version of the group. For example, if MachineDeployments exist in the cluster.x-k8s.io group at versions v1alpha1 and v1beta1, the autoscaler will choose v1beta1.

In some cases it may be desirable to specify which version of the API the cluster autoscaler should use. This can be useful in debugging scenarios, or in situations where you have deployed multiple API versions and wish to ensure that the autoscaler uses a specific version.

Setting the CAPI_VERSION environment variable will instruct the autoscaler to use the version specified. This works in a similar fashion as the API group environment variable, with the exception that there is no default value. When this variable is not set, the autoscaler will use the behavior described above.
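Before pinning a version, it can help to check which versions of the group the API server actually serves. kubectl api-versions prints one group/version pair per line, so a hypothetical helper can filter them with awk; v1beta1 in the usage comment is only an example value.

```shell
#!/usr/bin/env bash
# List the versions served for the Cluster API group (CAPI_GROUP, or
# cluster.x-k8s.io by default) from the "group/version" lines of kubectl api-versions.
served_capi_versions() {
  local group="${CAPI_GROUP:-cluster.x-k8s.io}"
  kubectl api-versions | awk -F/ -v g="${group}" '$1 == g { print $2 }'
}

# Usage:
#   served_capi_versions
#   CAPI_VERSION=v1beta1 cluster-autoscaler --cloud-provider=clusterapi
```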

Sample manifest

A sample manifest that will create a deployment running the autoscaler is available. It can be deployed by passing it through envsubst, providing these environment variables to set the namespace to deploy into as well as the image and tag to use:

export AUTOSCALER_NS=kube-system

export AUTOSCALER_IMAGE=us.gcr.io/k8s-artifacts-prod/autoscaling/cluster-autoscaler:v1.20.0

envsubst < examples/deployment.yaml | kubectl apply -f-

A note on permissions

The cluster-autoscaler-management role for accessing Cluster API scalable resources is scoped as a ClusterRole. This may not be ideal for all environments (e.g. multi-tenant environments). In such cases, it is recommended to scope it to a Role mapped to a specific namespace.
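A namespace-scoped alternative could be created with kubectl create role and kubectl create rolebinding, as sketched below. The role, namespace, and service account names are hypothetical, and the verb and resource list is an assumption: compare it with the rules of the cluster-autoscaler-management ClusterRole in the sample manifest before relying on it. It may also make sense to combine such a Role with the namespace form of --node-group-auto-discovery shown earlier.

```shell
#!/usr/bin/env bash
# Hypothetical names: adjust to your environment.
CAPI_NAMESPACE="${CAPI_NAMESPACE:-blue}"
AUTOSCALER_NAMESPACE="${AUTOSCALER_NAMESPACE:-kube-system}"
AUTOSCALER_SA="${AUTOSCALER_SA:-cluster-autoscaler}"

# Namespace-scoped replacement for the cluster-autoscaler-management ClusterRole.
# The verb/resource list below is an assumption; align it with the sample manifest.
create_namespaced_autoscaler_rbac() {
  kubectl create role cluster-autoscaler-management -n "${CAPI_NAMESPACE}" \
    --verb=get,list,watch,update,patch \
    --resource=machinedeployments.cluster.x-k8s.io,machinedeployments.cluster.x-k8s.io/scale \
    --resource=machinesets.cluster.x-k8s.io,machinesets.cluster.x-k8s.io/scale \
    --resource=machines.cluster.x-k8s.io

  # Bind the Role to the autoscaler's service account, which may live in another namespace.
  kubectl create rolebinding cluster-autoscaler-management -n "${CAPI_NAMESPACE}" \
    --role=cluster-autoscaler-management \
    --serviceaccount="${AUTOSCALER_NAMESPACE}:${AUTOSCALER_SA}"
}

# Usage: CAPI_NAMESPACE=blue create_namespaced_autoscaler_rbac
```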

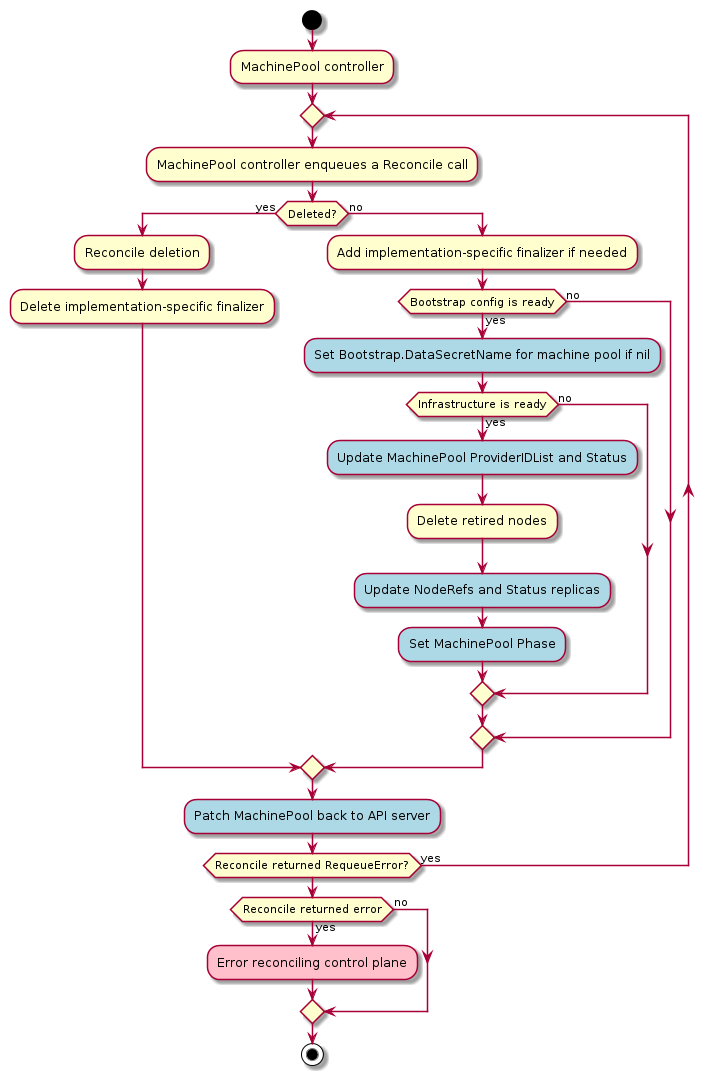

Experimental Features

Cluster API now ships with a new experimental package that lives under the exp/ directory. This is a